Background and Objectives: Collection of feedback regarding medical student clinical experiences for formative or summative purposes remains a challenge across clinical settings. The purpose of this study was to determine whether the use of a quick response (QR) code-linked online feedback form improves the frequency and efficiency of rater feedback.

Methods: In 2016, we compared paper-based feedback forms, an online feedback form, and a QR code-linked online feedback form at 15 family medicine clerkship sites across the United States. Outcome measures included usability, number of feedback submissions per student, number of unique raters providing feedback, and timeliness of feedback provided to the clerkship director.

Results: The feedback method was significantly associated with usability, with QR code scoring the highest, and paper second. Accessing feedback via QR code was associated with the shortest time to prepare feedback. Across four rotations, separate repeated measures analyses of variance showed no effect of feedback system on the number of submissions per student or the number of unique raters.

Conclusions: The results of this study demonstrate that preceptors in the family medicine clerkship rate QR code-linked feedback as a high usability platform. Additionally, this platform resulted in faster form completion than paper or online forms. An overarching finding of this study is that feedback forms must be portable and easily accessible. Potential implementation barriers and the social norm for providing feedback in this manner need to be considered.

An effective clinical learning environment relies heavily on timely, objective feedback.1,2 Yet, collection of feedback regarding medical student clinical experiences for formative or summative purposes remains a challenge across clinical settings.2,3 Residents and faculty often cite other clinical priorities as barriers to completing timely medical student feedback, despite the understanding that increased quantity, quality, and timeliness of feedback leads to improved accuracy and validity in end-of-rotation assessments, learner self-reflection, and future professional growth. Current literature review indicates a common and continued quest for educators to determine an ideal feedback collection method to optimize the learner experience, and to best describe overall performance.4

Medical student feedback collection methods vary widely across facilities and programs. Traditionally, medical students used clinical encounter cards to record and communicate feedback regarding performance on clinical rotations. Written forms have been shown to improve the quantity and timeliness of feedback, but may have other limitations.5-9 For example, written form accountability poses a challenge in some environments, such as when daily feedback forms do not reach the educational supervisor, resulting in limited actionable data at the time of evaluation.

Technological advancements have prompted medical education to transition from paper forms to other novel technologies.10 Recent studies regarding medical student feedback found that the use of electronic surveys improved the quality and quantity of feedback to the students, while also improving the perceived quality of the clerkship.11-13 Among these, the formative value of electronic feedback methods varied, or in some instances, was not measured. One study using quick response (QR) technology in a surgical residency program demonstrated increased longitudinal postprocedure feedback resulting in high feedback quality satisfaction rates.14

Educational resources to enhance learning curricula commonly utilize QR codes, but we are not aware of studies evaluating the utilization of QR codes to improve medical student feedback mechanisms. We created a novel method of collecting daily medical student feedback using QR code technology across multiple clerkship sites. The purpose of this study was first to assess the usability of the innovation, and second, to determine whether the use of a QR code-linked online feedback form improves the frequency and efficiency of preceptor feedback to medical students in the family medicine clerkship.

Pilot Study

The first author developed a QR code intervention to elicit feedback from preceptors about clerkship students within a single-site teaching program. QR codes are created with a QR code generator website, which converts a uniform resource locator (URL, or web address), text, or a phone number into a square, black-and-white pixelated barcode. For the pilot, a QR code was generated from the internet address of a program-specific online feedback form. The program gave each clerkship student a laminated card displaying the QR code, which the preceptor scanned with a free QR reader application on a phone or tablet after each learning session. Preceptors were encouraged to complete the feedback form at the time verbal feedback was provided to the student. Submission of the completed form automatically populated an online database, which was immediately available to the clerkship site coordinator for formulating summative feedback. The pilot intervention demonstrated a 98% increase in the number of feedback entries for rotating medical students in the first 4 months of implementation.

Multisite Evaluation

The pilot’s success was presented at the Uniformed Services University of the Health Sciences (USU) Annual Clerkship Site Coordinator Workshop in September 2015, to gauge other site coordinators’ interest in implementing the innovation. The Military Primary Care Research Network (MPCRN) subsequently invited its member family medicine teaching programs to participate in a multisite evaluation of the innovation. The protocol was reviewed by the USU Human Research Protections Program Office and determined to be nonhuman subjects research, conducted for quality improvement purposes.

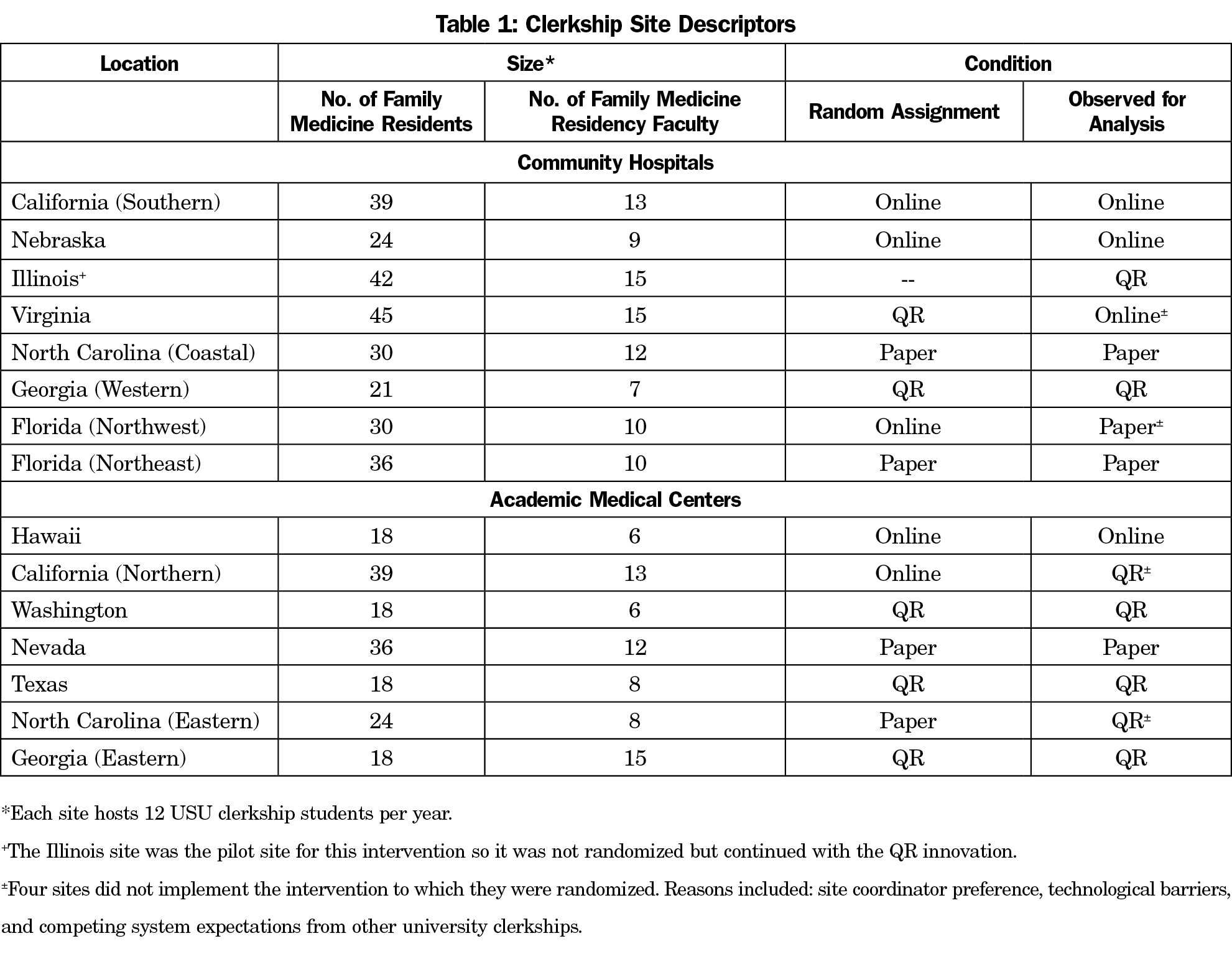

The USU clerkship year begins annually in January. USU clerkship students complete a 6-week family medicine rotation at one of 15 military family medicine residency sites across the United States (Table 1). The QR code innovation was tested concurrently with four rotations of student clerkships at each site from January to July 2016. Following a prospective design, sites were initially randomized into control (paper-based forms), online form only, or QR code-linked online form, using an online randomizer (random.org). Four sites did not implement the intervention to which they were randomized. Reasons included site coordinator preference, technological barriers, and competing system expectations from other university clerkships.

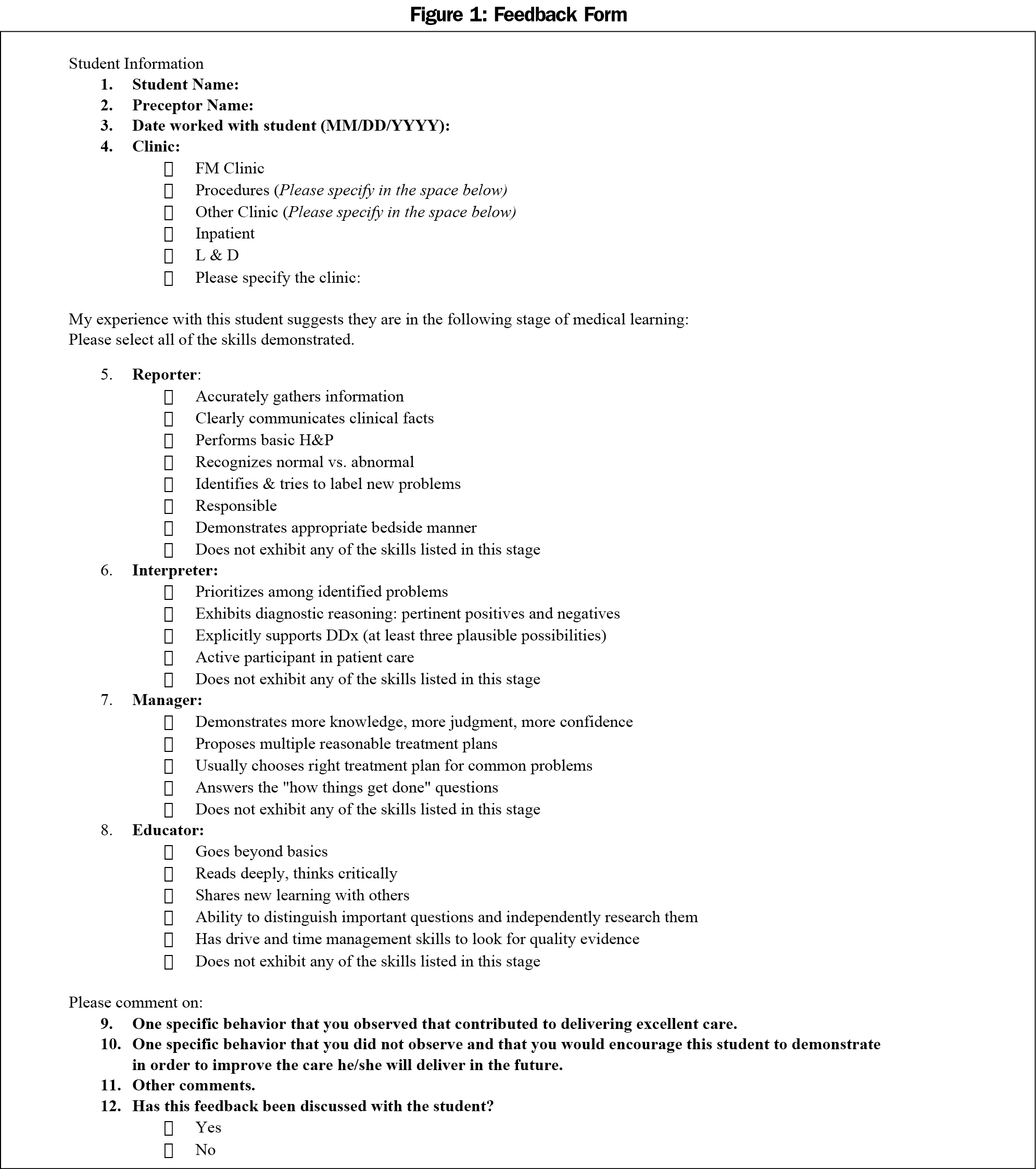

In the paper group, preceptors reported feedback on clerkship students in the form of a physical paper sheet collected by the on-site clerkship coordinator. In the online group, preceptors were given a link to a Survey Monkey feedback form, which included the RIME (Reporter, Interpreter, Manager, Educator) model—a framework for assessing learners in clinical settings (Figure 1).15

In the QR group, a unique QR code was designed for each site, which auto-linked the user to the online Survey Monkey feedback form that could be monitored and collated by the research coordinator. We directed sites to create several laminated 3x3-inch cards depicting the QR code, display them in the clinic and preceptor room, and distribute them to the rotating clerkship students. Faculty and resident preceptors were asked to download a free QR code reader on their mobile device and scan the QR code after their teaching encounter to complete the feedback form.
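As a minimal sketch of the site-specific linking described above, the following shows one way a distinct form address per site could be built before handing it to a QR code generator. The base address, the `site` query parameter, and the function names are hypothetical; the source does not describe how the site-specific links were actually constructed.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

# Hypothetical placeholder address; the study's real form URL is not given in the text.
BASE_FORM_URL = "https://example.org/feedback-form"


def site_form_url(site_id: str) -> str:
    """Build the address a site's QR code would encode (assumed query-parameter scheme)."""
    return f"{BASE_FORM_URL}?{urlencode({'site': site_id})}"


def site_from_url(url: str) -> str:
    """Recover the site identifier from a scanned address, e.g. for collating submissions."""
    return parse_qs(urlsplit(url).query)["site"][0]


# Illustrative site labels only, not the study's site names.
urls = {site: site_form_url(site) for site in ["site-01", "site-02", "site-03"]}
```

Each resulting string is what a QR generator would turn into the printed barcode, so scanning the card opens that site's form directly.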

Evaluation of the innovation included two levels of measurement, first at the individual level and second at the site level. At the individual level, the main outcome measure was the respondents’ perception of the usability of the feedback system. Usability is the ease of use and learnability of a system, regardless of whether it is paper or computer-based. For this evaluation, the validated System Usability Scale (SUS)16 was administered within the pre- and postsurvey. On this scale, scores range from 0 to 100. Previous research established that an SUS score above 68 would be considered above average, and therefore more usable, and anything less than 68 below average. We additionally assessed efficiency of preceptor feedback with a single ordinal-level question that asked the respondent to indicate how much time it took to complete the feedback (Figure 2).
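For readers unfamiliar with how SUS responses become a 0 to 100 score, the sketch below applies the standard SUS scoring rule (10 items rated 1 to 5; odd-numbered items contribute the rating minus 1, even-numbered items contribute 5 minus the rating; the sum is multiplied by 2.5). The function name and the example ratings are illustrative and are not study data.

```python
from typing import Sequence

SUS_BENCHMARK = 68  # above-average threshold cited in the text


def sus_score(responses: Sequence[int]) -> float:
    """Score one 10-item SUS questionnaire (each item rated 1-5) on a 0-100 scale."""
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for index, rating in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded.
            total += rating - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded.
            total += 5 - rating
    return total * 2.5


# Hypothetical respondent, not taken from the study.
example = [5, 1, 4, 2, 5, 1, 4, 2, 5, 2]
score = sus_score(example)
above_average = score > SUS_BENCHMARK
```

A site-level SUS value is then simply the mean of its respondents' individual scores.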

At the site level of measurement, we assessed the frequency and efficiency of preceptor feedback to medical students on the family medicine clerkship. Frequency measures included the number of feedback submissions per student and the number of unique raters providing feedback data. Efficiency was also assessed by recording the timeliness of feedback submitted to the clerkship director.

Individual-level measures were collected with two administrations of an online survey. A baseline survey was disseminated to the 15 sites’ coordinators and program directors in December 2015, prior to any feedback system changes. In this dissemination, we asked the coordinators to send the survey to their sites’ preceptors (faculty and residents). Participants created a unique code to link pre- and posttest data. The survey was open for 2 weeks. The research team sent a reminder email 1 week before the survey closed. After the fourth clerkship rotation in July 2016, a postinnovation survey was distributed to the sites with the same instructions and availability. The research coordinator collected site-level measures from the clerkship site coordinators and clerkship director.

Process Outcomes

The clerkship site coordinator was charged with clerkship site implementation of the study protocol and acted as a gatekeeper for the diffusion of the innovation. Though all the sites were initially randomized into groups, some sites adopted the innovation more readily than others. Adoption was also challenged by limitations in the wireless network of clerkship sites. Since only USU medical students were expected to use the QR system, feedback modalities used by medical students rotating from other medical schools diluted the diffusion. At sites that hosted USU and other medical school clerkship students, the site used QR codes exclusively for USU students while preceptors used paper forms for all other students. This mix of feedback forms and modalities created a barrier to adoption. Statistical comparisons were calculated based on the final observed conditions: four paper sites, four online sites, and seven QR code sites.

Individual-Level Outcomes

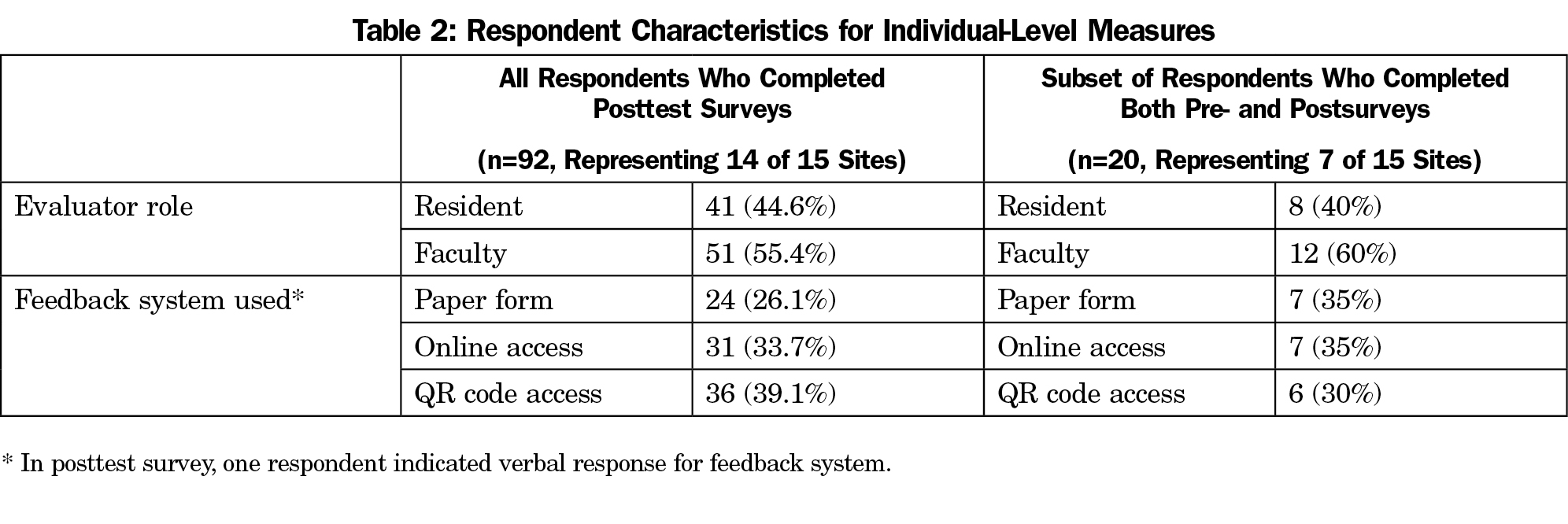

Of an estimated potential 597 preceptors, 92 completed the posttest survey for a 15.41% response rate. Table 2 presents respondent characteristics. Across conditions, self-reported time to complete feedback forms was negatively correlated with usability (Spearman’s rho(92)=-.259, P<.05), demonstrating that forms were rated as less usable when they were perceived to take longer to complete. A chi-square test demonstrated that completing feedback forms via QR code was associated with the shortest time to complete (χ²(8, n=91)=19.40, P<.05; Figure 2).
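The reported chi-square result can be checked with a short calculation. The sketch below uses the closed-form upper-tail probability of the chi-square distribution, which holds only for even degrees of freedom, so it needs no statistics library. The 3 x 5 table layout (three feedback systems by five time categories) is an assumption that happens to yield 8 degrees of freedom; the source does not state the number of time categories.

```python
import math


def df_independence(rows: int, cols: int) -> int:
    """Degrees of freedom for a chi-square test of independence on a rows x cols table."""
    return (rows - 1) * (cols - 1)


def chi2_sf_even_df(statistic: float, df: int) -> float:
    """Upper-tail probability P(X >= statistic); closed form valid only for even df."""
    if df <= 0 or df % 2 != 0:
        raise ValueError("this closed form requires a positive, even df")
    half = statistic / 2.0
    series = sum(half ** i / math.factorial(i) for i in range(df // 2))
    return math.exp(-half) * series


# Assumed layout: 3 feedback systems x 5 ordinal time categories.
df = df_independence(3, 5)

# Statistic as reported in the text.
p_value = chi2_sf_even_df(19.40, df)  # roughly 0.013
significant_at_05 = p_value < 0.05
```

The resulting probability falls below .05 but above .01, which agrees with the reported P<.05.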

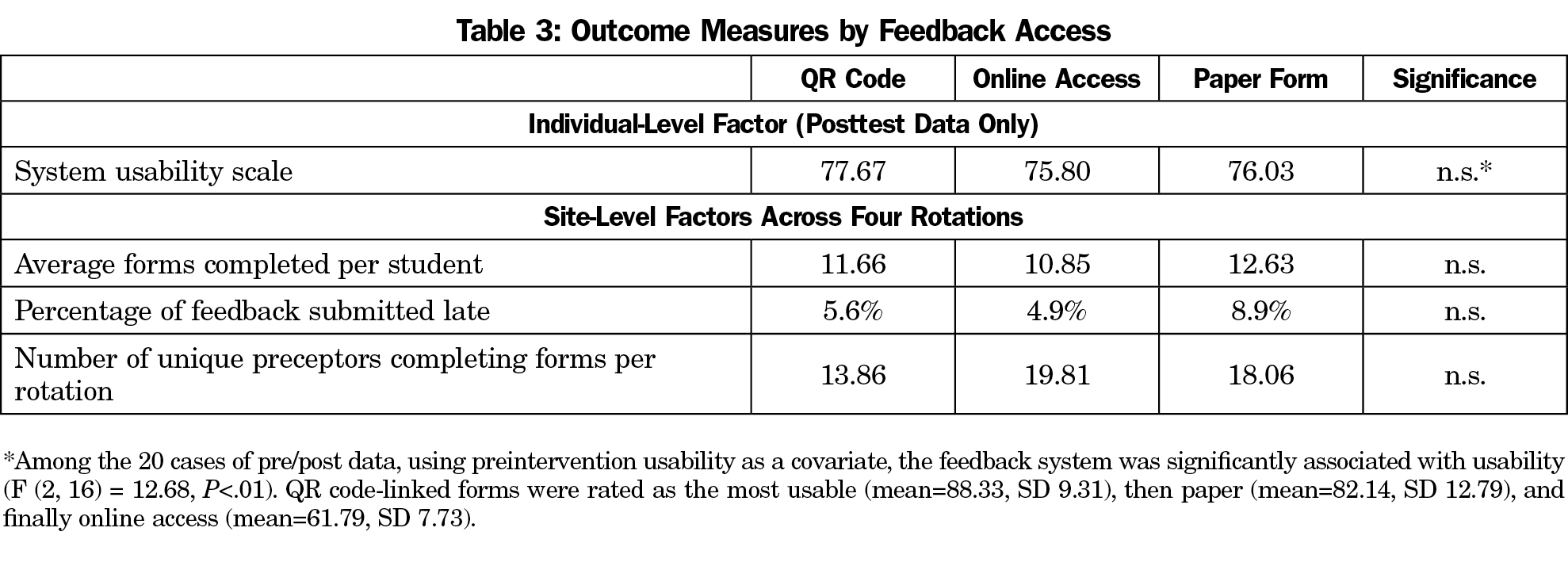

Of 78 pretest and 92 posttest responses, only 20 participants (representing seven of the 15 sites) completed both the pre- and posttest, which were linked by the unique identifiers. In an analysis of covariance testing the observed feedback system on usability, using preintervention usability as a covariate, the feedback system was significantly associated with usability (F (2, 16)=12.68, P<.01). QR code-linked forms were rated as the most usable (mean=88.33, SD 9.31), then paper (mean=82.14, SD 12.79) and finally online access (mean=61.79, SD 7.73). Compared to the established SUS benchmark score of 68, these results indicate the QR code-linked forms and the paper forms have better than average usability; however, the online access forms do not reach that benchmark.
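The degrees of freedom in the reported F statistic follow from the sample described above. As a worked check, assuming three feedback groups ($k=3$), one covariate ($c=1$, preintervention usability), and the 20 linked participants ($N=20$):

```latex
\begin{aligned}
df_{\text{between}} &= k - 1 = 3 - 1 = 2 \\
df_{\text{error}}   &= N - k - c = 20 - 3 - 1 = 16
\end{aligned}
```

These values match the reported F (2, 16).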

To better interpret this comparison across feedback modalities, we ran an analysis of variance (ANOVA) on the postsurvey responses alone. This test again placed QR code and paper forms ahead of online access in usability, but the difference was not statistically significant (Table 3).

Site-Level Outcomes

Across four rotations, separate repeated measures ANOVAs showed no apparent effect of the feedback system on the number of submissions per student or the number of unique raters (Table 3).

These results demonstrate that preceptors in the family medicine clerkship rate QR code-linked online feedback as a high usability platform. Additionally, this platform resulted in faster form completion than paper or online forms. An overarching finding of this study is that feedback forms must be portable and easily accessible. Mobile QR code-linked feedback and paper feedback formats allow preceptors to access and complete forms during the teaching session. Meanwhile, a web-based online system initially requires entering an internet address on a device (portable or fixed) and creating a bookmark, thus generating potential barriers to completion. Feedback using the online system is easier to postpone, and there was no preceptor reminder system to complete feedback.

From the clerkship director perspective, these findings demonstrate that a QR code-linked feedback system is a tool we can provide to site coordinators to collect and provide feedback. We suggest that sites should have the decision power to select the modality that meets their own feasibility criteria. This decision-making process can be facilitated by the clerkship director, but local sites know what system meets their needs and fits their unique limitations and culture.

Local-level opinion leaders play a crucial role in facilitating adoption of new technologies. Implementation of a QR code feedback system is dependent on a social norm shift and reliable technological infrastructure. Users must recognize the relative advantage, compatibility, complexity, trialability, and observability of the innovation before adopting it into practice.17 Although we initially demonstrated the advantage, and provided opportunity for trialability and observability, local existing information systems limited implementation of the QR technology over wireless networks. Discipline leaders argue that health information technology can improve health care systems, yet they also recognize that health innovations have not yet been fully integrated.18

This study reveals two potential reasons for ineffective diffusion. First, hospital systems can create barriers to implementing innovation. The dissonant nature of sharing information while protecting information has created structures and systems such as closed networks without wireless access that can impede the flow of information. Second, we do not think our audience is unique in its timid embrace of an innovation. In fact, we argue that for some tasks, technology may not solve the problems of inefficient processes. Moreover, innovations do not have to be a new technology; we can design better paper forms and improve processes for delivery and dissemination. Our article is intended to demonstrate how to diffuse an innovation, not how to design one. Additionally, future research is needed to determine whether the feedback format impacts quality of feedback received.

Analysis was limited by the site-level choice to adopt or not to adopt the innovation, regardless of random assignment. Individual-level results were also limited by the small sample of participants who completed both usability surveys; this small sample represented only seven of the 15 clerkship sites. Along with the self-selection bias that must be considered in the context of all voluntary surveys, two site factors may have introduced bias by limiting participation in the survey. First, we relied on the program director and clerkship site coordinator to disseminate the email inviting participation in the survey. We did not have a mechanism to verify that all potential participants received the invitation. Second, hospital information system security measures prevented some sites from accessing the online survey from all clinical computers, which forced participants to find another computer to complete the survey. We expect that this also decreased participation. In spite of these limitations, the innovation was successfully implemented at several sites, demonstrating the feasibility of a QR code-linked feedback system as an acceptable form of gathering feedback during medical student clerkships.

Acknowledgments

This study was conducted within the Military Primary Care Research Network. We acknowledge the 15 site coordinators, including Jeff Schievenin, Caitlyn Rerucha, Richard Gray, David Klein, and John Vogel, who continually strive to improve learning for our medical students.

Previous presentations: This project was presented at the 2017 annual meeting of the Uniformed Services Academy of Family Physicians and at the 2017 annual meeting of the Society of Teachers of Family Medicine.

Disclaimers: The opinions and assertions contained herein are the private views of the authors and are not to be construed as official or as reflecting the views of the US Air Force, US Army, the Uniformed Services University of the Health Sciences, or the Department of Defense at large.

References

1. Srinivasan M, Hauer KE, Der-Martirosian C, Wilkes M, Gesundheit N. Does feedback matter? Practice-based learning for medical students after a multi-institutional clinical performance examination. Med Educ. 2007;41(9):857-865. doi: 10.1111/j.1365-2923.2007.02818.x.
2. Ende J. Feedback in clinical medical education. JAMA. 1983;250(6):777-781. doi: 10.1001/jama.1983.03340060055026.
3. Elnicki DM, Zalenski D. Integrating medical students’ goals, self-assessment and preceptor feedback in an ambulatory clerkship. Teach Learn Med. 2013;25(4):285-291. doi: 10.1080/10401334.2013.827971.
4. Greenberg LW. Medical students’ perceptions of feedback in a busy ambulatory setting: a descriptive study using a clinical encounter card. South Med J. 2004;97(12):1174-1178. doi: 10.1097/01.SMJ.0000136228.20193.01.
5. Richards ML, Paukert JL, Downing SM, Bordage G. Reliability and usefulness of clinical encounter cards for a third-year surgical clerkship. J Surg Res. 2007;140(1):139-148. doi: 10.1016/j.jss.2006.11.002.
6. Kogan JR, Shea JA. Implementing feedback cards in core clerkships. Med Educ. 2008;42(11):1071-1079. doi: 10.1111/j.1365-2923.2008.03158.x.
7. Haghani F, Hatef Khorami M, Fakhari M. Effects of structured written feedback by cards on medical students’ performance at Mini Clinical Evaluation Exercise (Mini-CEX) in an outpatient clinic. J Adv Med Educ Prof. 2016;4(3):135-140.
8. Bandiera G, Lendrum D. Daily encounter cards facilitate competency-based feedback while leniency bias persists. CJEM. 2008;10(1):44-50. doi: 10.1017/S1481803500010009.
9. Bennett AJ, Goldenhar LM, Stanford K. Utilization of a formative evaluation card in a psychiatry clerkship. Acad Psychiatry. 2006;30(4):319-324.
10. Ferenchick GS, Solomon D, Foreback J, et al. Mobile technology for the facilitation of direct observation and assessment of student performance. Teach Learn Med. 2013;25(4):292-299. doi: 10.1080/10401334.2013.827972.
11. Tews MC, Treat RW, Nanes M. Increasing completion rate of an M4 emergency medicine student end-of-shift evaluation using a mobile electronic platform and real-time completion. West J Emerg Med. 2016;17(4):478-483. doi: 10.5811/westjem.2016.5.29384.
12. Mooney JS, Cappelli T, Byrne-Davis L, Lumsden CJ. How we developed eForms: an electronic form and data capture tool to support assessment in mobile medical education. Med Teach. 2014;36(12):1032-1037. doi: 10.3109/0142159X.2014.907490.
13. Stone A. Online assessment: what influences students to engage with feedback? Clin Teach. 2014;11(4):284-289. doi: 10.1111/tct.12158.
14. Reynolds K, Barnhill D, Sias J, Young A, Polite FG. Use of the QR Reader to Provide Real-Time Evaluation of Residents’ Skills Following Surgical Procedures. J Grad Med Educ. 2014;6(4):738-741. doi: 10.4300/JGME-D-13-00349.1.
15. Sepdham D, Julka M, Hofmann L, Dobbie A. Using the RIME model for learner assessment and feedback. Fam Med. 2007;39(3):161-163.
16. Usability.gov. System Usability Scale. http://www.usability.gov/how-to-and-tools/methods/system-usability-scale.html. Accessed March 13, 2015.
17. Rogers EM. Diffusion of Innovations. 5th ed. New York: Free Press; 2003.
18. Phillips RL Jr, Bazemore AW, DeVoe JE, et al. A family medicine health technology strategy for achieving the triple aim for US health care. Fam Med. 2015;47(8):628-635.
