Background and Objectives: Current literature on review of applicant social media (SoMe) content for resident recruitment is scarce. With the recent increase in the use of privacy settings, and the cost of the recruitment process, the aim of this study was to describe the practice and outcomes of review of applicant SoMe in resident recruitment and its association with program director or program characteristics.

Methods: This study was part of the 2020 Council of Academic Family Medicine’s Educational Research Alliance (CERA) annual survey of family medicine residency program directors (PDs) in the United States.

Results: The overall response rate for the survey was 39.8% (249/626). About 40% of PDs reported reviewing applicant SoMe content. The majority (88.9%) of programs did not inform applicants of their SoMe review practices. The most common findings of SoMe review were that the content raised no concerns (38/94; 40.4%) or was consistent with the application material (34/94; 36.2%). Forty PDs (17.0%) reported having moved an applicant up or down the rank list based on SoMe review. Review of applicant SoMe was not statistically associated with program size, program type, PD age, PD SoMe use, or program SoMe use.

Conclusions: SoMe review has not become routine practice in family medicine resident recruitment. SoMe review findings were mostly consistent with the applicant profile and raised no concerns, and few programs changed an applicant's ranking as a result. This calls for more studies to explore the value of SoMe review for resident selection, including its relationship to future performance.

Resident recruitment is a complex, stressful, and expensive process. In 2020, a family medicine residency program processed over 1,100 applications on average through the National Resident Matching Program (NRMP) for an average of only eight first-year resident positions.1 In 2018, program directors (PDs) estimated spending an average of around $25,000 on recruitment, not including faculty, resident, and staff time, but individual programs reported up to $190,000 in direct recruitment expenditures.2 PDs consistently identify perceived commitment to family medicine and evidence of professionalism and ethics as the two most important factors in selecting applicants to interview and in ranking them.1 As perceptions of personal characteristics play such a crucial role in this high-stakes process, programs could be expected to seek all available information about applicants. Similarly, applicants could be expected to use all opportunities to portray themselves in ways that could enhance their selection for interview and ranking by programs. Social media (SoMe) content offers unique insights into the professional and personal characteristics of individuals, and potentially provides information that may not be apparent in the formal application materials, personal communications, or even during interviews.4

SoMe use is almost universal among residency applicants. At least 99% of US medical students use SoMe for educational purposes and/or personal and professional networking.5,11 Review of applicant SoMe content has become integral to recruitment in the business world but the extent of its current use in resident recruitment is difficult to assess.4-7 A 2019 review reported that 12% to 38% of plastic surgery PDs frequently screen the SoMe profiles of applicants. The higher rates were reported from more recent surveys, suggesting the practice is increasing.6,7 The seven surveys of SoMe use in resident recruitment identified in literature reviews cover several specialties but do not include any in family medicine.6,7

A major focus of applicant SoMe review by residency programs is detection of unprofessional conduct.6,9,10,18-22 At least 60% of medical schools report encountering serious SoMe content generated by students, including profane, discriminatory, or sexually explicit material; depictions of intoxication or illegal drug use; or disclosure of patient information.14 Reports from orthopedic surgery and otolaryngology programs identified unprofessional content in the SoMe of 11% to 16% of applicants.19,20 Moreover, 22%-35% of medical students self-report inappropriate use.12,13 However, what is considered unprofessional or inappropriate is time and culture bound.12 With the lack of a clear definition of unprofessional behaviors, challenges have also been raised about subjective judgments of inappropriate professional content in SoMe, and the potentially devastating impact to an applicant of innocuous content being labelled as offensive or inappropriate.5,8 Applicant SoMe review is an informal review and consequently may be biased as it does not follow clear guidelines of what is considered unprofessional. Programs may avoid or be more cautious about assessing applicant SoMe following the adverse publicity and forced retraction of a study describing the SoMe of vascular surgery trainees.23-25

Given the importance of the topic, the current controversies, and the lack of information on SoMe use in family medicine residency programs, we surveyed family medicine residency PDs regarding review of applicant SoMe in resident recruitment. Areas of interest included the prevalence of applicant SoMe review overall and by selected program characteristics, how and when such reviews are conducted, the prevalence of identified inappropriate SoMe content, and the influence of review findings on applicant ranking by programs.

This survey included 10 questions that were part of a larger omnibus survey conducted by the Council of Academic Family Medicine Educational Research Alliance (CERA). The methodology of the CERA Program Director Survey has previously been described in detail.26 The CERA Steering Committee evaluated questions for consistency with the overall subproject aim, readability, and existing evidence of reliability and validity. Pilot testing was done on family medicine educators who were not part of the target population. Questions were modified following pilot testing for flow, timing, and readability. The American Academy of Family Physicians Institutional Review Board approved the project in April 2020. Data were collected from May 11, 2020 to June 2, 2020.

The sampling frame for the survey was all program directors of Accreditation Council for Graduate Medical Education-accredited family medicine residencies in the United States. Email invitations to participate were delivered by CERA using the online program SurveyMonkey. Two follow-up emails to encourage nonrespondents to participate were sent weekly after the initial email invitation and a final reminder was sent 2 days before the survey closed, for a total of four requests to participate. The survey contained a qualifying question to remove programs that had not graduated three resident classes. Of the 698 program directors invited to participate in the survey, 34 indicated that they did not meet criteria of having graduated three residency classes. These responses were removed from the sample, providing a final population size of 626.

We used descriptive analyses (percentages) to describe participant characteristics, such as program size (small, medium, and large), program location (Northeast, Midwest, West Coast, etc.), and program type (university based, community based, etc.), and the practice of examining applicant SoMe. We used χ2 analyses to examine the association between the practice of examining applicant SoMe and the various PD and program characteristics. Responses indicating three, four, five, or six SoMe accounts for PDs or their programs were collapsed into one category, “three or more,” and the frequency-of-use responses “once a week” and “less than once a week” were collapsed into “once a week or less.”
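As an illustration of the χ2 test of association described above, the following is a minimal sketch in plain Python. It is not the authors' analysis code, and the software used for the study is not stated here. The function names and the 2×2 table of counts are hypothetical and are not survey data. The closed-form P value shown applies only to tables with 1 degree of freedom; larger tables would need a general χ2 distribution function from a statistics package.

```python
from math import erfc, sqrt


def chi_square_independence(table):
    """Return the Pearson chi-square statistic and degrees of freedom
    for an r x c contingency table given as a list of rows of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df


def p_value_df1(stat):
    """Upper-tail P value for a chi-square statistic with 1 degree of freedom."""
    return erfc(sqrt(stat / 2.0))


# Hypothetical counts for illustration only (NOT the survey data).
# Rows: program reviews applicant SoMe (yes, no)
# Columns: program has its own SoMe account (yes, no)
hypothetical_table = [[30, 20], [25, 25]]

stat, df = chi_square_independence(hypothetical_table)
p = p_value_df1(stat)
print(f"chi-square [{df}] = {stat:.2f}, P = {p:.2f}")
```

A P value above the conventional .05 threshold, as in this made-up table, would be read as no statistically detectable association between the two characteristics.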

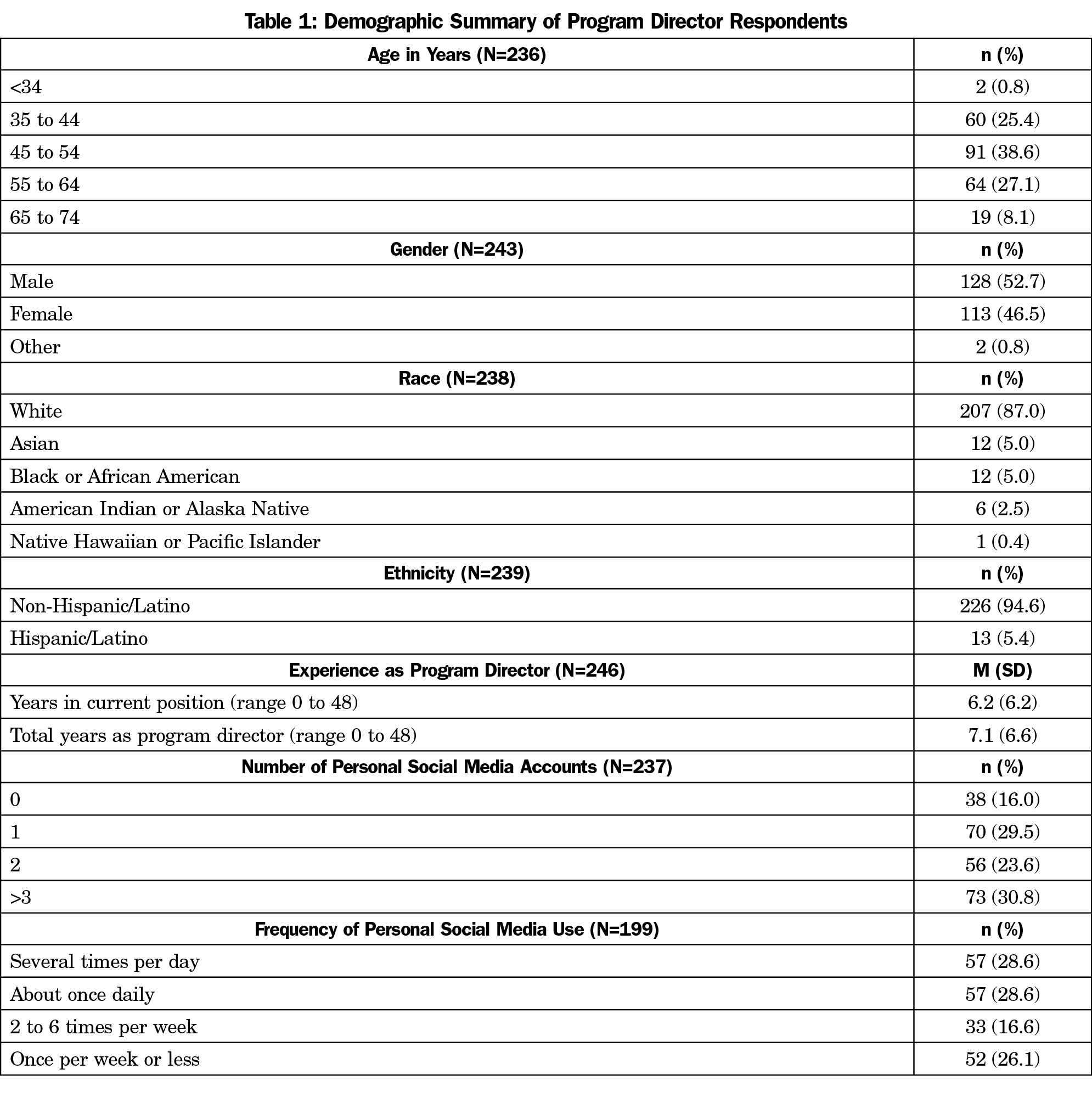

Program Directors (Table 1)

The overall response rate for the survey was 39.8% (249/626). The majority of respondents were non-Hispanic/Latino (226/239, 94.6%); 207 (87.0%) were White; slightly over half were male (128/243, 52.7%); and 38.6% (91/236) were between the ages of 45 and 54 years. Respondents reported a range of less than 2 months to 48 years of experience as a program director, with a mean of 7.1 (±6.6) years. Table 1 shows all PD demographics.

The majority of PDs reported at least one personal SoMe account (199/237, 84.0%), with about one-third having three or more personal accounts (73/237, 30.8%). More than half of PDs with personal SoMe accounts (114/199, 57.3%) reported using SoMe at least once per day. The number of personal SoMe accounts and the frequency of SoMe use were not associated with the age, gender, ethnicity, or race of the program director. Among PDs with personal SoMe accounts, the number of personal accounts was positively associated with frequency of use: 50.9% of those with three or more accounts used SoMe several times per day, compared to 24.6% of those with one or two accounts (χ2 [24]=55.9, P<.0001).

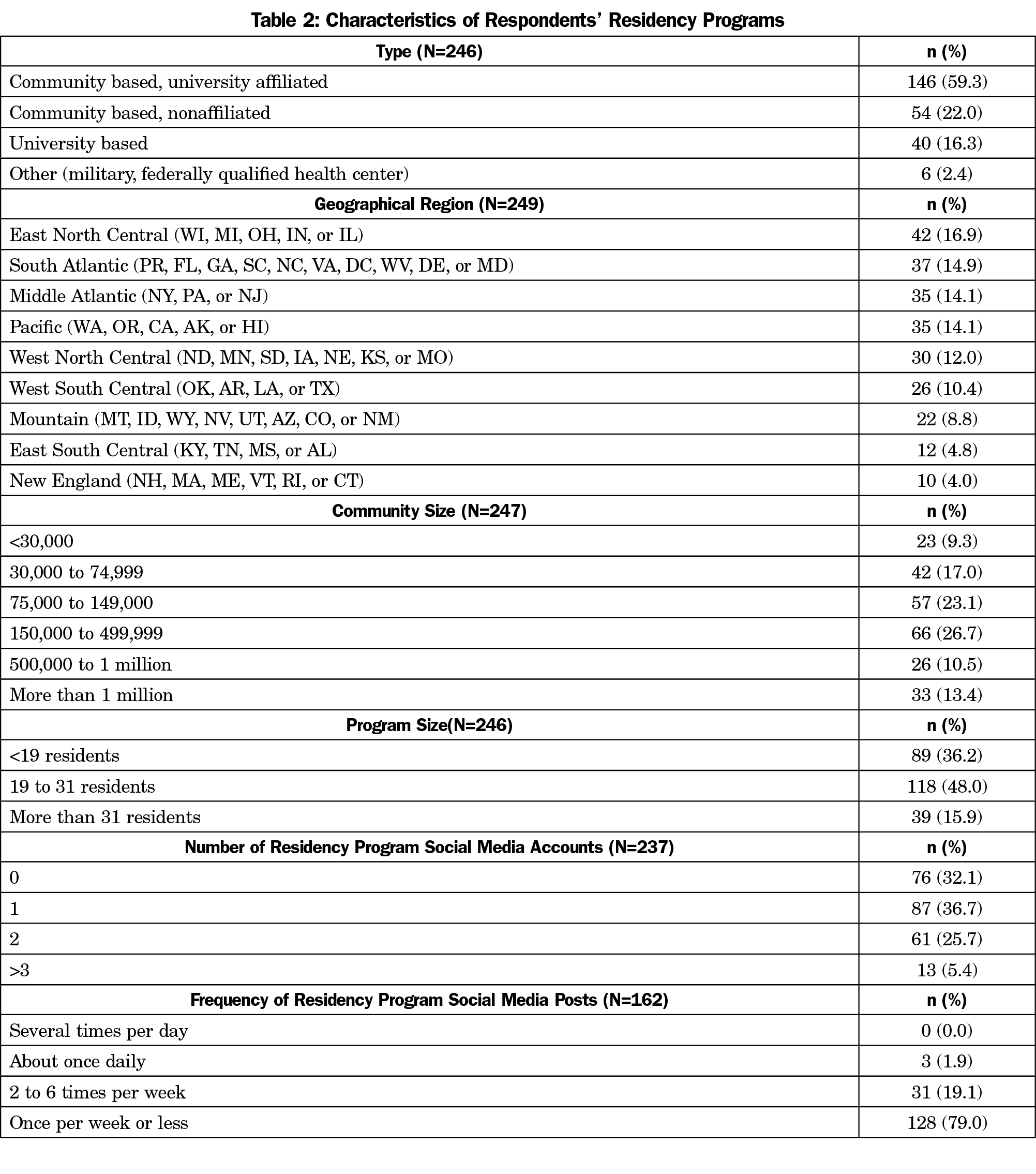

Residency Programs (Table 2)

The programs surveyed were generally representative of programs nationwide, but university-based programs were overrepresented (16.7%, compared to 8.3% nationally27; χ2 [1]=12.4, P=.0004, 95% CI 3.3% to 21.4%). All regions were represented, with the largest proportion of responses from the East North Central region (42/239, 17.6%). Table 2 displays all residency program demographics.

Two-thirds of residency programs (161/239, 67.4%) reported having SoMe accounts. Slightly over one-third of programs (87/239, 36.4%) reported one SoMe account, whereas only 13 reported three or more (5.4%). Nearly 79% of programs (128/162) utilized SoMe once per week or less frequently. Only three of the 162 programs (1.9%) reported daily use. There was no association between a program's number of SoMe accounts or frequency of use and the PD's number of personal accounts or use of SoMe. Residency program size was positively associated with the number of program SoMe accounts (χ2 [8]=15.7, P=.05), but not with frequency of posting on SoMe (χ2 [6]=4.7, P=.58).

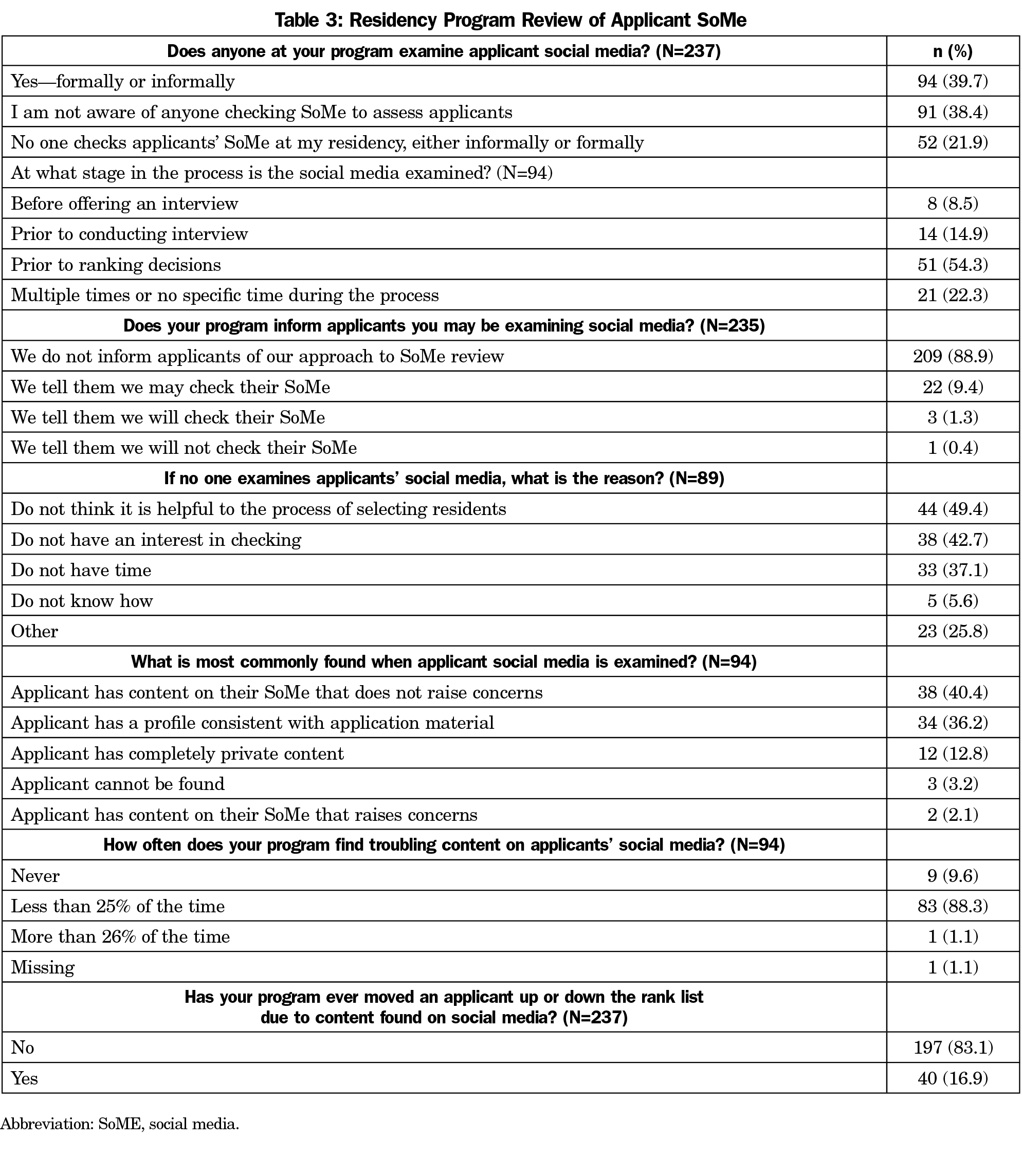

Program Review of Applicant SoMe (Table 3)

Ninety-four PDs (94/237, 39.7%) reported formal or informal examination of applicant SoMe content. Ninety-one PDs (38.4%) were not aware whether anyone in their program examined applicant SoMe. Of programs providing a reason for not reviewing applicant SoMe, nearly half (44/89, 49.4%) did not perceive the review as helpful, and 42.7% (38/89) had no interest in the review. Review of applicant SoMe was not statistically associated with program size, program type, PD age, PD SoMe use, or program SoMe use.

In programs that examined applicant SoMe content, just over half (51/94, 54.3%) conducted the review prior to making their ranking decisions, but only eight programs (8.5%) reviewed applicant SoMe content before offering an interview. The vast majority (209/235, 88.9%) of programs did not inform applicants of their SoMe review practices. Three programs informed applicants that SoMe would be reviewed, while an additional 22 indicated that a review was possible. Only one program assured applicants that SoMe would not be reviewed. Fifteen programs (15/94, 15.9%) that attempted review reported that applicant SoMe content frequently could not be located or accessed. For applicants whose SoMe could be reviewed, the most common findings were that the content raised no concerns (38/94, 40.4%) or was consistent with the application material (34/94, 36.2%). Only one respondent (1.1%) estimated finding troubling content in the SoMe of more than 25% of applicants. However, 40 PDs (40/237, 16.9%) indicated that they had moved an applicant up or down their rank list due to information found on applicant SoMe. Programs that either formally or informally reviewed applicant SoMe were more likely to move an applicant on their rank list due to information found on SoMe (χ2 [3]=56.6, P<.0001). Thirty-five of the 40 PDs who had moved applicants on their program rank list had a formal or informal process for reviewing applicant SoMe, while four of the 40 PDs indicated their programs had no formal or informal review of applicant SoMe, and one indicated their program did not check applicant SoMe at all.
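The percentages in this section follow directly from the stated counts. The short sketch below, offered as a reader's arithmetic check rather than as part of the study's analysis, recomputes a selection of them to one decimal place. The counts and reported percentages are taken from the text; the variable and function names are illustrative.

```python
# (description, numerator, denominator, percentage as reported in the text)
reported = [
    ("survey response rate", 249, 626, 39.8),
    ("PDs reporting review of applicant SoMe", 94, 237, 39.7),
    ("PDs unaware of any applicant SoMe review", 91, 237, 38.4),
    ("review not perceived as helpful", 44, 89, 49.4),
    ("no interest in review", 38, 89, 42.7),
    ("review before ranking decisions", 51, 94, 54.3),
    ("review before offering an interview", 8, 94, 8.5),
    ("applicants not informed of review practices", 209, 235, 88.9),
    ("content raised no concerns", 38, 94, 40.4),
    ("content consistent with application", 34, 94, 36.2),
    ("PDs who moved an applicant on the rank list", 40, 237, 16.9),
]


def percent(numerator, denominator):
    """Percentage rounded to one decimal place."""
    return round(100.0 * numerator / denominator, 1)


# Collect any entries where the recomputed value differs from the text
mismatches = [
    (label, percent(n, d), stated)
    for label, n, d, stated in reported
    if percent(n, d) != stated
]

for label, n, d, stated in reported:
    print(f"{label}: {n}/{d} = {percent(n, d)}% (reported {stated}%)")
print("mismatches:", mismatches)
```

Because denominators vary with item nonresponse (for example, 237 versus 235 respondents), each percentage should be read against its own denominator.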

Residency programs use SoMe to increase program visibility and to screen residency applicants. Two systematic reviews have shown that few studies address SoMe and resident recruitment.28,29 This CERA survey aimed to fill a gap in understanding the frequency of applicant SoMe review during resident recruitment and applicant SoMe review outcomes among family medicine residency programs. About 40% of PDs reported reviewing applicant SoMe content. This is in line with the higher range of earlier reports from other specialties,6-10 but suggests SoMe review has not become routine practice in family medicine resident recruitment. The finding that an almost equal number of PDs were unaware of any applicant SoMe review suggests low priority and lack of interest by many PDs. This is reinforced by the fact that most of the content found did not raise any concern or was consistent with the application material. Nevertheless, 16.9% of the programs have moved at least one applicant up or down the rank list due to the content found on SoMe. SoMe review is more likely to negatively impact than positively enhance an applicant’s status. In a 2011 national survey of 22 specialties, 38% of PDs reported lowering, but only 6% recalled ever raising, applicant rankings following SoMe content review.9 In a 2011 survey, 33% of general surgery PDs reported lowering the ranking of an applicant, or removing them from consideration entirely, because of SoMe content.10 When repeated in 2015, only 11% reported these negative outcomes.18 It may seem that residency programs aim to use applicant SoMe review to exclude applicants.

Although evidence of professionalism and ethics is one of the most important aspects of recruitment,30 it is used less frequently than academic achievement. Furthermore, the relationship between SoMe content review and future performance and ethical conduct is unknown. The ability of the various residency selection criteria to predict performance has been studied in certain specialties; however, the majority relied on objective measures of academic performance in terms of grades or rank list.31-33 A more structured assessment using a professionalism mini-evaluation exercise score was able to predict attitude and global evaluation of first-year pediatric residents. In view of the current extensive time commitment of faculty and program directors devoted to the interviews and lack of evidence on future performance,2 it is debatable whether the extra time needed to review the applicant SoMe is worth the effort. However, the forced shift to a virtual residency interview format during the 2020-2021 recruiting season may lead to more reliance on virtual interviews in the future, which could increase the use of SoMe reviews by programs as they free up resources previously dedicated to facilitating in-person interviews.

Programs tended to use applicant SoMe review late in the recruitment process. The most common timing was prior to making rank list decisions, suggesting it was used as a confirmatory check on assessments made from the application materials and interview rather than a screening process in selecting applicants for serious consideration. Moreover, the majority of the programs did not inform the applicants about the possibility of SoMe review, raising concerns about the transparency of the recruitment process. Residents and medical students are becoming more aware of their SoMe content and its effect on recruitment.15 A 2013 study reported that 60% had altered, or intended to alter, their SoMe pages before applying to residency and 36% already had privacy-protected profiles.5 By 2016, studies reported over 90% of medical students using privacy settings to shield content.16,17 In our study, 13.5% of the program directors reported finding that applicants' content was completely private. This suggests that applicants who do not protect their profiles are comfortable with content being publicly viewed by medical school and residency faculty. However, it does not address whether content was significantly edited or curated to appeal to potential reviewers. The recent debates about perceptions of inappropriate content and the judgments made about applicants based on SoMe content could lead to programs avoiding SoMe review. Conversely, applicants could edit, or privacy-protect, SoMe content to the extent that review provides no useful information for programs.

Limitations of the study include possible bias from participants and lack of generalizability because 60% of family medicine program directors did not respond to the survey, possibly due to the increased demands on time during the COVID-19 pandemic or a lack of interest in the topic. Another pandemic-related limitation may be the cancellation of in-person interviews during the study period. This could have caused increased interest in obtaining information about applicants through SoMe at the time of the study, but not all programs may continue the practice when in-person interviewing resumes. The survey questionnaire was limited in the amount of detail that could be collected; hence, we were unable to elicit important items such as the definitions and prevalence of different forms of troubling content, the precise impact of SoMe review on ranking decisions, or whether the application, the interview, or an informal review triggered the applicant SoMe review. It was also impossible to evaluate potential competing influences on PD time that limit their personal SoMe use, such as nonwork duties relating to family. Future quantitative and qualitative studies are needed. All studies concerning SoMe have time-limited generalizability due to rapid social and technological changes in its use. The dynamic landscape of SoMe provides constantly changing availability and popularity of formats and a plethora of opportunities for their use.

About 40% of programs included in the CERA study reported reviewing the SoMe of applicants for residency positions. Applicants were seldom informed of screening practices. Most programs conducted the review prior to making rank list decisions, suggesting it was used to validate rather than screen applicants. Interestingly, SoMe review findings were mostly consistent with the applicant profile and raised no concerns, and few programs changed an applicant's ranking as a result. Consequently, it is time to look for additional value in applicant SoMe review, taking into consideration that it is a tedious process and is biased by subjective judgments of what constitutes inappropriate behavior. This calls for more studies to examine the value of SoMe review for resident selection.

Acknowledgments

The authors thank the faculty and staff who support the CERA program.

References

- National Resident Matching Program, Data Release and Research Committee. Results of the 2020 NRMP Program Director Survey. Washington, DC: National Resident Matching Program; 2020. Accessed December, 2020. https://mk0nrmp3oyqui6wqfm.kinstacdn.com/wp-content/uploads/2020/08/2020-PD-Survey.pdf

- Nilsen K, Callaway P, Phillips JP, Walling A. How much do family medicine residency programs spend on resident recruitment? A CERA study. Fam Med. 2019;51(5):405-412. doi:10.22454/FamMed.2019.663971

- National Resident Matching Program, Data Release and Research Committee. Results of the 2019 NRMP Applicant Survey by Preferred Specialty and Applicant Type. Washington, DC: National Resident Matching Program; 2019. Accessed December, 2020. https://mk0nrmp3oyqui6wqfm.kinstacdn.com/wp-content/uploads/2019/06/Applicant-Survey-Report-2019.pdf

- Wells DM. When faced with Facebook: what role should social media play in selecting residents? J Grad Med Educ. 2015;7(1):14-15. doi:10.4300/JGME-D-14-00363.1

- Strausburg MB, Djuricich AM, Carlos WG, Bosslet GT. The influence of the residency application process on the online social networking behavior of medical students: a single institutional study. Acad Med. 2013;88(11):1707-1712. doi:10.1097/ACM.0b013e3182a7f36b

- Sterling M, Leung P, Wright D, Bishop TF. The use of social media in graduate medical education: a systematic review. Acad Med. 2017;92(7):1043-1056. doi:10.1097/ACM.0000000000001617

- Economides JM, Choi YK, Fan KL, Kanuri AP, Song DH. Are we witnessing a paradigm shift?: A systematic review of social media in residency. Plast Reconstr Surg Glob Open. 2019;7(8):e2288. doi:10.1097/GOX.0000000000002288

- Khadpe J, Singh M, Repanshek Z, et al. Barriers to utilizing social media platforms in emergency medicine residency programs. Cureus. 2019;11(10):e5856. doi:10.7759/cureus.5856

- Go PH, Klaassen Z, Chamberlain RS. Residency selection: do the perceptions of US programme directors and applicants match? Med Educ. 2012;46(5):491-500. doi:10.1111/j.1365-2923.2012.04257.x

- Go PH, Klaassen Z, Chamberlain RS. Attitudes and practices of surgery residency program directors toward the use of social networking profiles to select residency candidates: a nationwide survey analysis. J Surg Educ. 2012;69(3):292-300. doi:10.1016/j.jsurg.2011.11.008

- Ruter D, Shirley LA, Jones C. Medical Student Utilization of Social Media. 2018. Acad Surg Congress Abst Archive. Accessed June 28, 2021. https://www.asc-abstracts.org/abs2018/94-01-medical-student-utilization-of-social-media/.

- Barlow CJ, Morrison S, Stephens HO, Jenkins E, Bailey MJ, Pilcher D. Unprofessional behaviour on social media by medical students. Med J Aust. 2015;203(11):439. doi:10.5694/mja15.00272

- Kitsis EA, Milan FB, Cohen HW, et al. Who’s misbehaving? Perceptions of unprofessional social media use by medical students and faculty. BMC Med Educ. 2016;16(1):67. doi:10.1186/s12909-016-0572-x

- Chretien KC, Greysen SR, Chretien JP, Kind T. Online posting of unprofessional content by medical students. JAMA. 2009;302(12):1309-1315. doi:10.1001/jama.2009.1387

- George DR, Green MJ, Navarro AM, Stazyk KK, Clark MA. Medical student views on the use of Facebook profile screening by residency admissions committees. Postgrad Med J. 2014;90(1063):251-253. doi:10.1136/postgradmedj-2013-132336

- Osman A, Wardle A, Caesar R. Online professionalism and Facebook—falling through the generation gap. Med Teach. 2012;34(8):e549-e556. doi:10.3109/0142159X.2012.668624

- Walton JM, White J, Ross S. What’s on YOUR Facebook profile? Evaluation of an educational intervention to promote appropriate use of privacy settings by medical students on social networking sites. Med Educ Online. 2015;20(1):28708. doi:10.3402/meo.v20.28708

- Langenfeld SJ, Vargo DJ, Schenarts PJ. Balancing privacy and professionalism: A survey of general surgery program directors on social media and surgical education. J Surg Educ. 2016;73(6):e28-e32. doi:10.1016/j.jsurg.2016.07.010

- Ponce BA, Determann JR, Boohaker HA, Sheppard E, McGwin G Jr, Theiss S. Social networking profiles and professionalism issues in residency applicants: an original study-cohort study. J Surg Educ. 2013;70(4):502-507. doi:10.1016/j.jsurg.2013.02.005

- Golden JB, Sweeny L, Bush B, Carroll WR. Social networking and professionalism in otolaryngology residency applicants. Laryngoscope. 2012;122(7):1493-1496. doi:10.1002/lary.23388

- Pander T, Pinilla S, Dimitriadis K, Fischer MR. The use of Facebook in medical education: a literature review. GMS Z Med Ausbild. 2014;31(3):Doc33.

- Schulman CI, Kuchkarian FM, Withum KF, Boecker FS, Graygo JM. Influence of social networking websites on medical school and residency selection process. Postgrad Med J. 2013;89(1049):126-130. doi:10.1136/postgradmedj-2012-131283

- Hardouin S, Cheng TW, Mitchell EL, et al. Prevalence of unprofessional social media content among young vascular surgeons. J Vasc Surg. 2020;72(2):667-671. doi:10.1016/j.jvs.2019.10.069

- Goldberg E. Women doctors ask: who gets to decide what’s ‘professional’? The New York Times. August 2, 2020. Accessed June 28, 2021. https://www.nytimes.com/2020/08/02/us/women-doctors-medbikini-professional-gender-bias.html

- Cox CE. #MedBikini vs JVS: Paper Spurs Debate Over Sexism, Social Media in Medicine. tctMD. July 30, 2020. Accessed June 28, 2021. https://www.tctmd.com/news/medbikini-vs-jvs-paper-spurs-debate-over-sexism-social-media-medicine

- Seehusen DA, Mainous AG III, Chessman AW. Creating a centralized infrastructure to facilitate medical education research. Ann Fam Med. 2018;16(3):257-260. doi:10.1370/afm.2228

- Residency Directory. American Academy of Family Physicians. Accessed June 28, 2021. https://www.aafp.org/medical-education/directory/residency/search

- Economides JM, Choi YK, Fan KL, Kanuri AP, Song DH. Are we witnessing a paradigm shift?: A systematic review of social media in residency. Plast Reconstr Surg Glob Open. 2019;7(8):e2288. doi:10.1097/GOX.0000000000002288

- Sterling M, Leung P, Wright D, Bishop TF. The use of social media in graduate medical education: a systematic review. Acad Med. 2017;92(7):1043-1056. doi:10.1097/ACM.0000000000001617

- Hartman ND, Lefebvre CW, Manthey DE. A narrative review of the evidence supporting factors used by residency program directors to select applicants for interviews. J Grad Med Educ. 2019;11(3):268-273. doi:10.4300/JGME-D-18-00979.3

- Raman T, Alrabaa RG, Sood A, Maloof P, Benevenia J, Berberian W. Does residency selection criteria predict performance in orthopaedic surgery residency? Clin Orthop Relat Res. 2016;474(4):908-914. doi:10.1007/s11999-015-4317-7

- Wagner JG, Schneberk T, Zobrist M, et al. What predicts performance? A multicenter study examining the association between resident performance, rank list position, and United States Medical Licensing Examination Step 1 scores. J Emerg Med. 2017;52(3):332-340. doi:10.1016/j.jemermed.2016.11.008

- Zuckerman SL, Kelly PD, Dewan MC, et al. Predicting resident performance from preresidency factors: a systematic review and applicability to neurosurgical training. World Neurosurg. 2018;110:475-484.e10. doi:10.1016/j.wneu.2017.11.078
