Background and Objectives: Virtual interviews (VI) for residency programs present a relatively new paradigm for recruitment. To date, studies have been small, largely descriptive, and focused on surgical and subspecialty areas. The purpose of the study was to assess residents’ perceptions about their VI experience and to compare those in primary care versus non-primary care specialties.

Methods: An electronic survey was sent to 35 designated institutional officials in Illinois with a resulting snowball sample to assess first-year residents’ perceptions of their virtual interviewing experience. A total of 82 postgraduate year-1 residents responded to the survey. We used descriptive analysis and χ² tests to analyze results.

Results: Respondents were mostly female (52.4%), White (79%), non-Hispanic (76%), attending a university residency program (76.3%), and in a primary care specialty (61.7%). In general, most respondents (54.8%-75.3%) felt their VI accurately portrayed their residency program experience. Resident morale, resident-faculty camaraderie, and educational opportunities were perceived as being best portrayed in the VI. Compared to non-primary care residents, primary care residents felt that their program’s VI more accurately portrayed the patient population served (P=.0184), resident morale in the program (P=.0038), and the overall residency experience (P=.0102). Still, 25.7% of respondents felt they were not accurately represented in the VI.

Conclusions: Respondents reported that the VI portrays the residency experience fairly well, yet there is opportunity to improve the overall experience. The more difficult experiences to convey (morale, camaraderie, and the overall resident experience) may be areas in which primary care programs are outpacing other training programs.

The COVID-19 pandemic necessitated significant adaptations among graduate medical education (GME) programs, ranging from adjustments in educational approaches to urgent innovations in residency and fellowship recruitment. Health and safety concerns, travel limitations, and quarantine restrictions related to the pandemic resulted in programs pivoting from well-defined, in-person recruitment plans to indeterminate virtual experiences. Prior to the pandemic, numerous programs experimented with virtual interviews (VI) to address student cost and maximize time efficiency for faculty.1-3 At the time, findings of cost reductions and improved faculty efficiency were favorable, but students reported a preference for in-person interviews because rapport was more difficult to establish in a virtual setting.

When the Coalition for Physician Accountability released its recommendation that all residency interviews once again be conducted virtually for the 2021-2022 interview cycle, the demand for VI validation grew substantially. Residency programs have collectively struggled to find the best approach to interview virtually in a manner that allows adequate applicant assessment while ensuring accurate representation of their program’s offerings.4 Huppert et al reviewed the limited data on virtual interviews in order to clarify best practices.5 Their recommendations included having a detailed interview plan, using standardized questions, preparing current residents to host virtual interviews, developing electronic materials for the program (including virtual social events), and collecting and analyzing data regarding each program’s interview process.

These recommendations have been based on limited data due to the recent and rapid institution of VI to GME. Most studies have been limited in scope and performed in surgical or subspecialty venues.5-8 Studies have analyzed various components of the virtual process to identify themes by which the process could more accurately represent programs.9 Evaluations of perception of platform, interactions with residents and faculty, residency site, and overall impressions have shown favorable ratings when individually analyzed. Despite these impressions, thematic analyses and qualitative responses in these studies have shown that a preference for in-person interviews remains. A recent study by Seifi et al evaluating VI perceptions of medical students and residents demonstrated a preference for in-person interviews.10 Common themes for this preference correlate with factors considered to be the driving determinants by which applicants rank programs: applicant fit with the program, resident interactions, and program site and culture, all of which offer insight into the core values of the institution.8,11-14

Comparison studies of surveyed programs have suggested that virtual interviewing may be perceived as unintentionally favoring programs over applicants: programs understand the dynamics of their institutional culture and can selectively showcase it during a VI, while applicants have no insight into what is not mentioned. This may have contributed to the finding that 38% of orthopedic fellowship applicants reported that the VI format resulted in them ranking a program lower.7 Concordantly, up to 25% of surveyed surgical residency and fellowship program directors felt virtual interviews negatively impacted their most recent matches.

Following this second consecutive year of virtual recruitment, we studied the perceptions of virtual interviewing among postgraduate year 1 (PGY-1) interns who completed VI in 2020-2021. Uniquely, this approach allows a retrospective examination by those who completed a VI and were then able to compare the VI’s portrayal with the reality of their current program. Moreover, much of the recent data come solely from the surgical specialties, whereas our study considers differences between those in primary care versus non-primary care specialties. Our investigation aimed to compare these perceptions to better understand new approaches to virtual interviewing as we look forward to future recruitment seasons.

We performed this exploratory retrospective survey study via electronic questionnaire, utilizing the online survey platform Qualtrics (Qualtrics, LLC, Provo, UT). The institutional review board at the University of Illinois College of Medicine Rockford approved the study.

An email invitation with the questionnaire link was sent to 35 designated institutional officials (DIOs) in the state of Illinois for distribution to their resident physicians if permitted by their institutional policies. DIOs passed this survey to other institutions, some of which were located in other states, resulting in a snowball sample. We calculated an estimated response rate by a manual count of the PGY-1 GME positions at each institution for which there was at least one respondent. We obtained the number of PGY-1 positions for each residency program at the respondent’s institution via a web search of the sponsoring institution. The questionnaire link was active from December 2021 through February 2022 and the original 35 DIOs were sent a reminder email for distribution approximately 2 weeks prior to closure of the study. Participation in the study was voluntary and anonymous.
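The response-rate estimate described above can be illustrated with a minimal Python sketch. The institution names, position counts, and the function name `estimated_response_rate` are hypothetical and chosen only for illustration; they are not the study's data. The sketch assumes the denominator is the sum of PGY-1 positions at every institution with at least one respondent.

```python
def estimated_response_rate(respondent_institutions, pgy1_positions):
    """Respondents divided by PGY-1 positions at represented institutions.

    respondent_institutions: one institution name per respondent.
    pgy1_positions: mapping of institution name to its PGY-1 position count.
    """
    # Only institutions with at least one respondent enter the denominator.
    represented = set(respondent_institutions)
    pool = sum(pgy1_positions[name] for name in represented)
    return len(respondent_institutions) / pool


# Hypothetical example data (not from the study).
positions = {"Hospital A": 40, "Hospital B": 25, "Hospital C": 60}
respondents = ["Hospital A"] * 6 + ["Hospital B"] * 4

# Hospital C has no respondents, so its 60 positions are excluded.
rate = estimated_response_rate(respondents, positions)
print(f"Estimated response rate: {rate:.1%}")
```

Because the number of residents who actually received the link is unknown, a figure computed this way is an approximation rather than a true response rate.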

The questionnaire collected demographic information including age, race, ethnicity, gender identity, marital status, and first-generation college graduate status. Other information collected included location of medical school attended (United States vs international), type of residency program (community vs university/academic), and resident specialty (primary care vs non-primary care). We categorized residents enrolled in family medicine, pediatrics, and internal medicine programs as primary care. We categorized all other specialty programs as non-primary care. We also collected information about the number of residency programs to which a resident applied, number of programs that offered an interview, and number of interviews completed. We analyzed all descriptive variables using univariate analysis.

The survey assessed perceptions of the completed VI process for PGY-1 residents. Respondents rated how the following residency characteristics were portrayed to them in a VI setting: resident experience, resident wellness, city/location, resident morale, resident/faculty camaraderie, educational opportunities, program facilities, and patient population. These characteristics were assessed using a Likert scale with response options of strongly agree, somewhat agree, neither agree nor disagree, somewhat disagree, and strongly disagree. We recategorized responses into agree, neither agree nor disagree, and disagree. We stratified data by resident specialty (primary care vs non-primary care) and used χ² tests to assess differences for each of the residency characteristic variables above. We set significance at P<.05.
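The recategorization and stratified comparison described above can be sketched in Python as follows. This is a minimal illustration, not the analysis code used in the study: the response counts are invented, the function names are hypothetical, and the test shown is a plain Pearson chi-square on a 2×3 table. With 2 degrees of freedom the upper-tail probability has the closed form exp(−x/2), so only the standard library is needed.

```python
import math
from collections import Counter

# Map the five Likert options onto the three collapsed categories.
COLLAPSE = {
    "strongly agree": "agree",
    "somewhat agree": "agree",
    "neither agree nor disagree": "neither",
    "somewhat disagree": "disagree",
    "strongly disagree": "disagree",
}
CATEGORIES = ["agree", "neither", "disagree"]


def collapse_counts(responses):
    """Return [agree, neither, disagree] counts for raw Likert responses."""
    counts = Counter(COLLAPSE[r.strip().lower()] for r in responses)
    return [counts[c] for c in CATEGORIES]


def chi_square_2x3(row_a, row_b):
    """Pearson chi-square statistic and P value for a 2x3 table (df = 2).

    Assumes every column has at least one response, so that no expected
    count is zero.
    """
    table = [row_a, row_b]
    row_totals = [sum(r) for r in table]
    col_totals = [a + b for a, b in zip(row_a, row_b)]
    n = sum(row_totals)
    stat = 0.0
    for i in range(2):
        for j in range(3):
            expected = row_totals[i] * col_totals[j] / n
            stat += (table[i][j] - expected) ** 2 / expected
    # Survival function of the chi-square distribution with df = 2.
    return stat, math.exp(-stat / 2)


# Hypothetical raw responses for one characteristic (not study data).
primary_raw = (["Strongly agree"] * 20 + ["Somewhat agree"] * 15
               + ["Neither agree nor disagree"] * 5
               + ["Somewhat disagree"] * 4 + ["Strongly disagree"] * 1)
non_primary_raw = (["Strongly agree"] * 5 + ["Somewhat agree"] * 8
                   + ["Neither agree nor disagree"] * 6
                   + ["Somewhat disagree"] * 6 + ["Strongly disagree"] * 5)

primary = collapse_counts(primary_raw)
non_primary = collapse_counts(non_primary_raw)
stat, p_value = chi_square_2x3(primary, non_primary)
print(primary, non_primary, round(stat, 3), round(p_value, 4))
```

Collapsing five options into three raises the expected count in each cell, which matters for the chi-square approximation when the sample is small.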

Finally, the survey included a section on how applicant and program ranking may have been different in an in-person setting, as well as how accurately the respondent believed they were perceived in a VI setting. Specifically, we asked if the respondents believed that they were accurately perceived, whether the residency program would have ranked them differently following an in-person interview, or if the respondent would have ranked the program differently following an in-person interview.

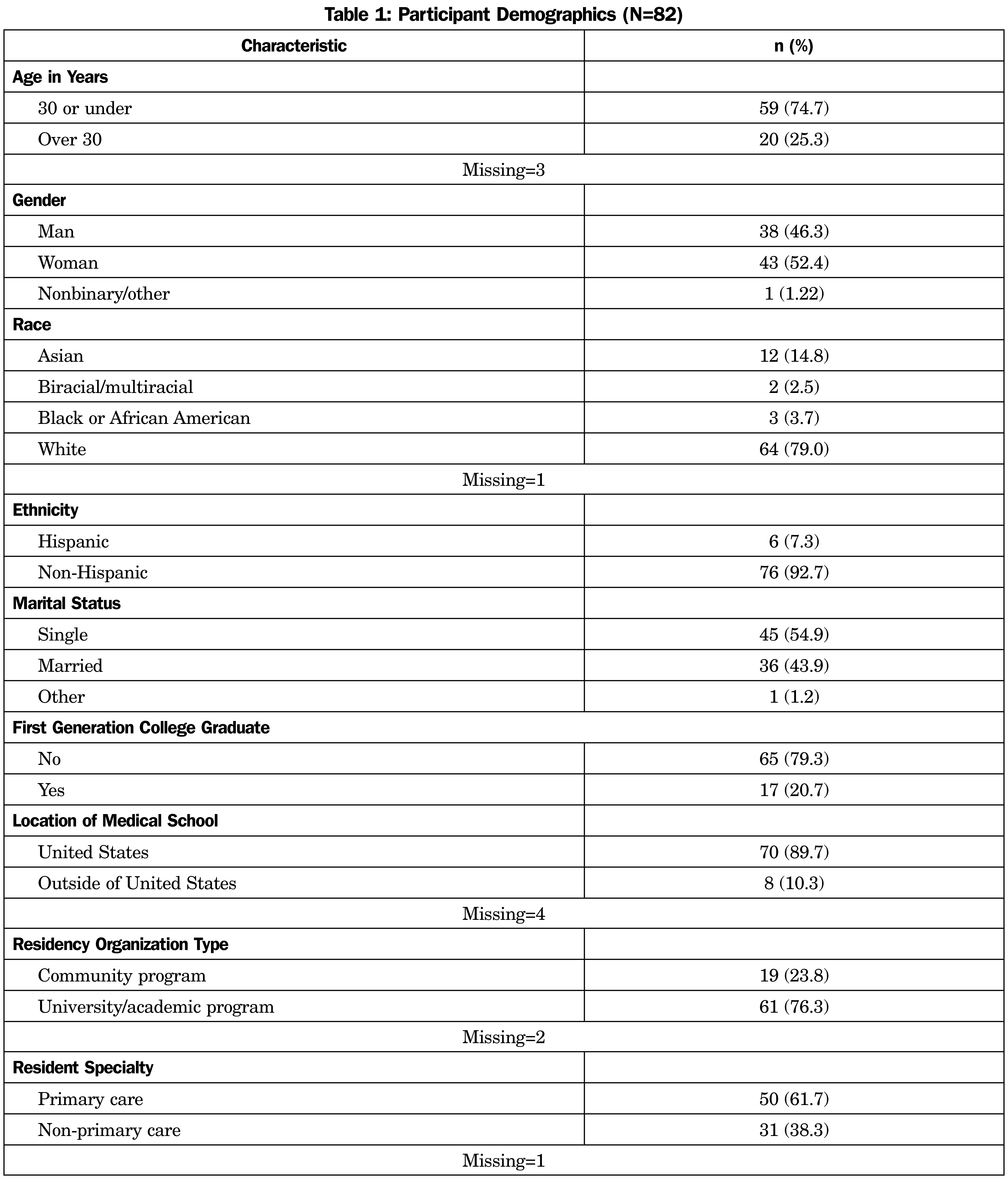

A total of 82 PGY-1 resident respondents from 14 institutions in eight states (IL, IN, NJ, NY, SC, TN, TX, VA) completed this survey out of an estimated pool of 428 PGY-1 residents, for a response rate of approximately 18%. Demographic characteristics of the respondents are shown in Table 1. Respondents were predominantly White (79.0%), non-Hispanic (92.7%), and attended US medical schools (89.7%). Most of the respondents were in a university/academic residency program (76.3%) and were in a primary care residency (61.7%).

Overall, respondents reported that they applied to a mean of 73.4 programs (range: 1-200), were invited to a mean of 16.0 interviews (range: 1-70), and completed a mean of 13.1 interviews (range: 1-36). When stratified by specialty (primary care vs non-primary care), we found that respondents who applied to primary care programs applied to a mean of 77.6 (range: 1-200) programs, received invitations for interviews from a mean of 17.2 (range: 1-70) programs, and completed a mean of 13.2 (range: 1-36) interviews.

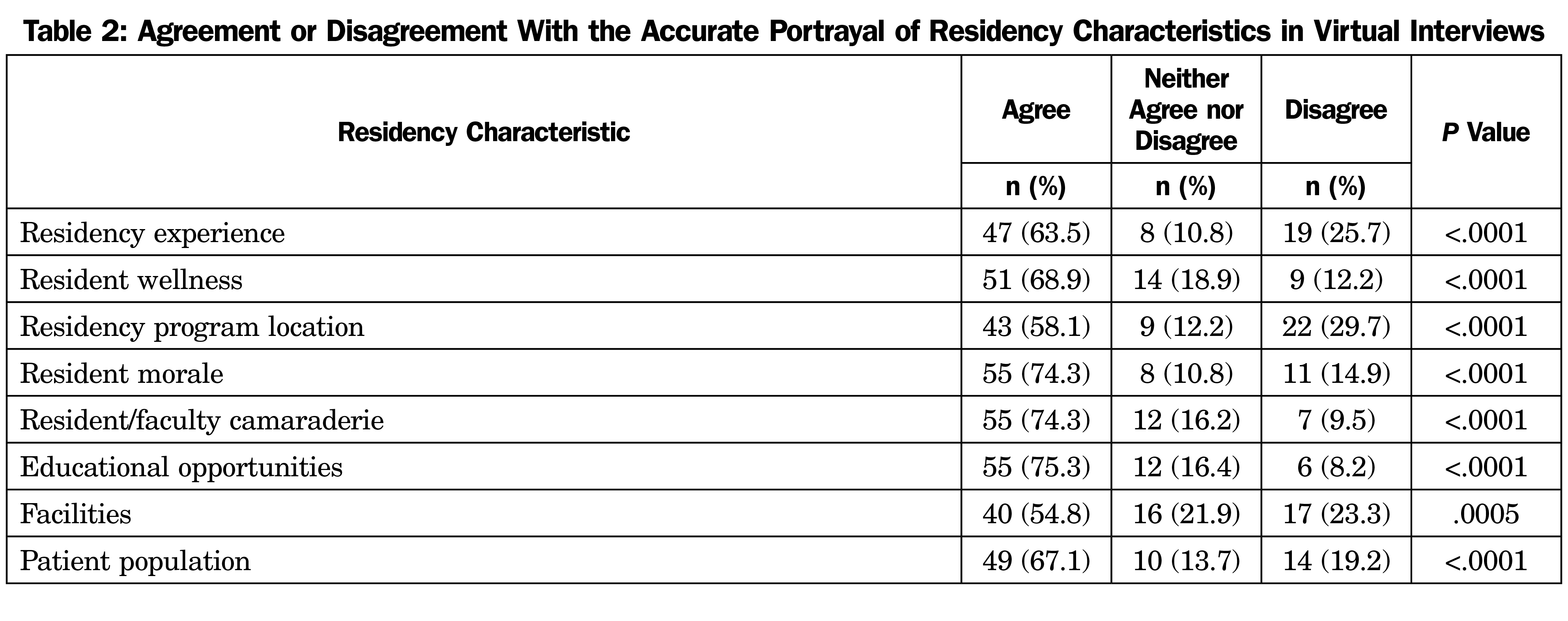

Respondents were asked about how accurately various residency characteristics were portrayed in a virtual interview. As shown in Table 2, most respondents felt that all residency characteristics assessed were accurately portrayed in a VI setting. The differences in the total number of responses across items are due to missing responses.

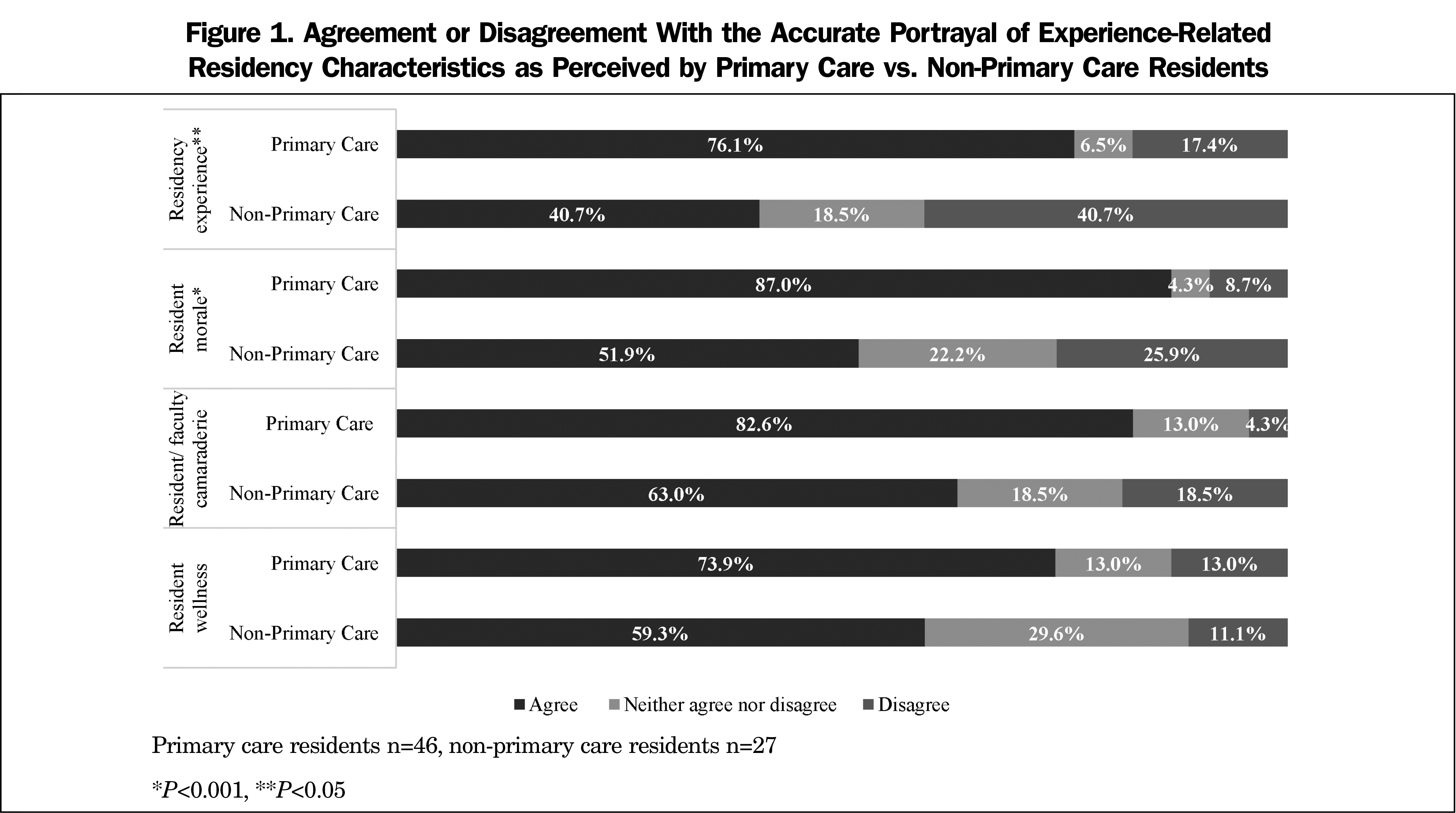

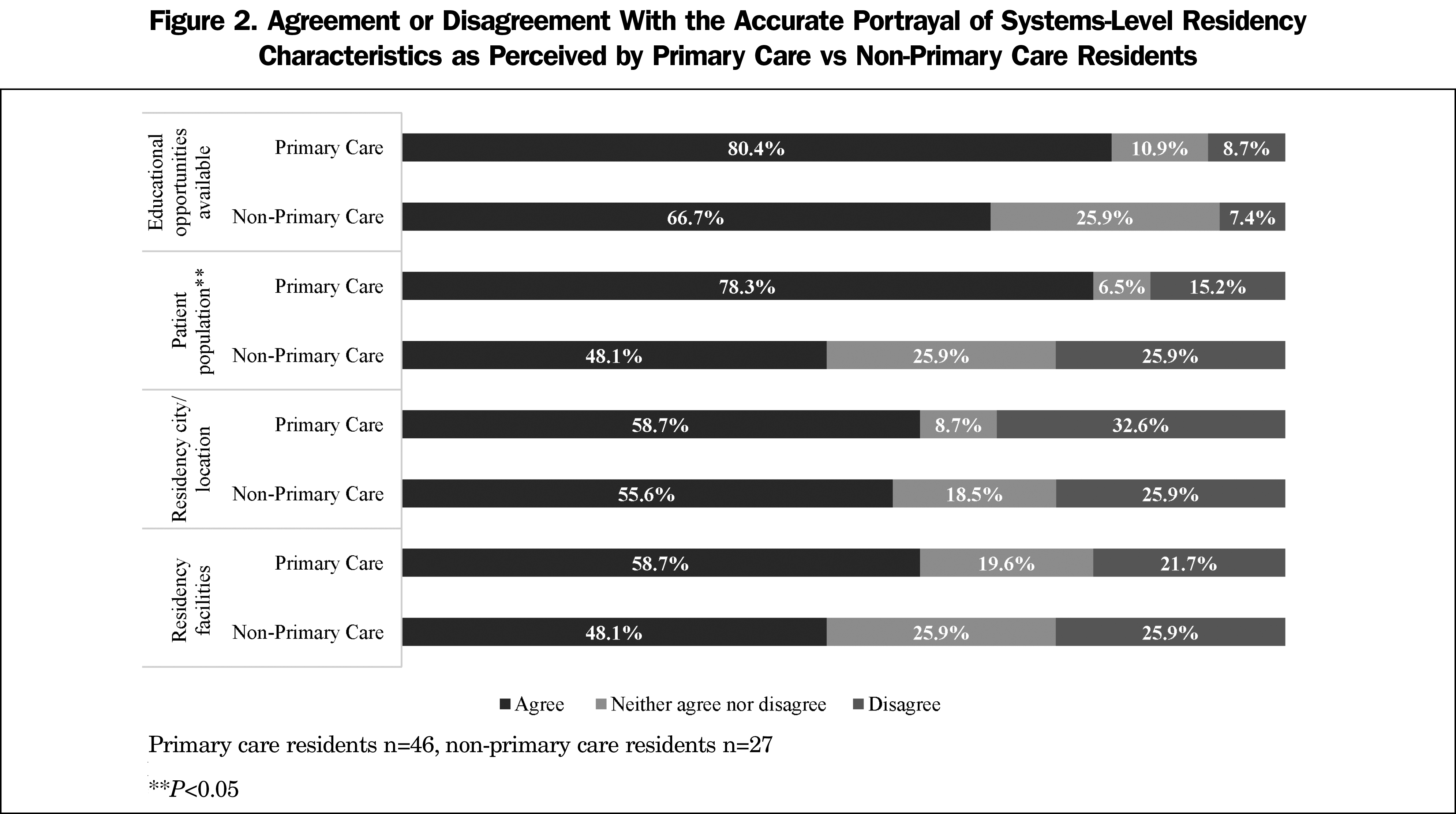

We stratified the residency characteristics from Table 2 by specialty (primary care vs non-primary care). Only three characteristics were significantly different between the two specialty categories. Respondents reported that they felt that the primary care programs to which they matched more accurately depicted their residency experience, resident morale (Figure 1), and patient population (Figure 2) in their virtual interviews.

When asked about how they were perceived in a virtual interviewing setting, over half (58.1%) of all respondents felt they were perceived accurately in a VI setting, while 25.7% felt they were not perceived accurately. Most respondents (72.6%) felt they would have ranked the program the same whether it was an in-person or a virtual interview, while 10.9% felt they would have ranked the program lower in an in-person interview. A similar percentage (72.9%) felt they would have been ranked the same by the residency program whether it was an in-person or a virtual interview, yet 22.9% felt they would have been ranked higher in an in-person interview.

Virtual interviewing is a relatively new paradigm for GME recruitment. Despite the benefits of reduced cost for medical student applicants and time efficiencies for faculty, there remains much to understand about the process of VI. Our study is unique in that we surveyed PGY-1 residents who experienced the VI process in the 2020-2021 recruitment cycle and participated in our survey study after matriculating into their residency program; they could uniquely identify gaps in the VI process. Our study is also unique because we compared perceptions of primary care respondents with non-primary care respondents. The needs for the conveyance of program attributes may be different between these two groups, or perceived as more accurately portrayed in the primary care specialties.

Our study sample comprised respondents who were mostly 30 years of age or younger with slightly more females, predominantly White or Asian, non-Hispanic, and largely attended US medical schools. This largely coincides with the current demographics of physicians in training in the United States.15 The respondents reported applying to an average of 73 GME programs, which is more than the 60.5 programs applied to in 2018.16 Ultimately, respondents reported interviewing at an average of 13 programs in the 2020-2021 interview cycle. These program application and interview numbers are similar for both primary care and non-primary care respondents.

When considering the respondent perceptions of how the VI portrayed the residency experience, resident wellness, resident morale, resident-faculty camaraderie, educational opportunities, and the patient population served, there was a general agreement that these were at least adequately portrayed in the VI. Our findings are in general agreement with others.9,11-12,14 However, 20%-30% of respondents felt that several of these program attributes were not adequately conveyed in the VI, leaving ample opportunity for improvement in this process. Based solely on the percentage of respondents who disagreed with the specific program attributes being adequately portrayed in the VI, it may be that programs should focus on improving how they convey information about the program city/location, residency experience, facilities, and patient population served in the training program. This could be accomplished with additional video presentations, more details about the local patient population, or inclusion of these specific topics during the interview, anticipating applicants’ interest in these areas.

When we stratified the respondents’ perceptions of the VI by either primary care or non-primary care, we found that primary care respondents felt the VI conveyed the resident experience, resident morale, and patient population significantly better than did the non-primary care respondents. Based solely on the rates of disagreement with any of these three program attributes, the primary care programs to which our survey respondents matched are excelling at conveying these specific attributes to potential applicants.

Consistent with a study by Ding et al, we found that just over half of the respondents felt that the VI was a medium in which they were accurately portrayed as a program applicant.13 However, a concerning finding is that one-quarter of the respondents surveyed felt that they were not accurately portrayed in the VI, and nearly 23% felt that they would have been ranked higher if given the opportunity for an in-person interview. Despite the benefits in cost savings and ability to expand the applicant’s search area for residency programs, we still have a lot of work to do to convey equity and opportunity in the new paradigm of VI. Arguments that the VI paradigm improves equity in the GME program selection process may be tempered by the fact that a significant portion of applicants do not feel this medium provides them with the best opportunity to convey their best self.

Limitations

This study has limitations. First, we had to estimate our sample size and response rate. While our survey was distributed to DIOs, it was unknown how many individuals received the survey link. Our estimation, based upon a count of all PGY-1 positions at each institution from which we received at least one response, suggests a response rate of 18%. Participation bias is another limitation, as residents may have felt pressured to respond differently following receipt of surveys from their DIOs. Second, our sample size was relatively small and made further subanalyses difficult. This also limited our ability to utilize a 5-point Likert scale due to small cell numbers. While our population was representative of typical medical student demographics, the small sample size limits the generalizability to all residency programs. Lastly, we asked respondents to speculate regarding how they would have responded if their interview were in person, potentially increasing subjectivity of responses to the ranking questions that may have differed with real-life experience.

Despite its limitations, this study provides important information on the perception of the VI experience as it relates to GME selection, and uniquely allowed the respondents to interpret their perception of the programs that interviewed them, and the programs to which they matched. The primary care programs to which our respondents matched did well in conveying the resident experience, resident morale, and population served, but can improve in better depicting the program city/location and facilities available. Further study might investigate these findings and the factors that contribute to them.

Overall, PGY-1 respondents in primary care programs do not appear to be disadvantaged by the VI process. Our GME climate has undergone dramatic change initiated by a pandemic. Respondents reported that VI portrays the residency experience fairly well, yet there is opportunity to improve the overall experience. The more difficult experiences to convey (morale, camaraderie, and the overall resident experience) may be areas in which primary care programs are outpacing other training programs. Future studies should consider the perspectives of those residents who went through in-person interviews and are now performing VI as interviewers. More studies of the VI process in primary care and family medicine specifically are needed.

References

- Shah SK, Arora S, Skipper B, Kalishman S, Timm TC, Smith AY. Randomized evaluation of a web based interview process for urology resident selection. J Urol. 2012;187(4):1380-1384. doi:10.1016/j.juro.2011.11.108

- Melendez MM, Dobryansky M, Alizadeh K. Live online video interviews dramatically improve the plastic surgery residency application process. Plast Reconstr Surg. 2012;130(1):240e-241e. doi:10.1097/PRS.0b013e3182550411

- Edje L, Miller C, Kiefer J, Oram D. Using skype as an alternative for residency selection interviews. J Grad Med Educ. 2013;5(3):503-505. doi:10.4300/JGME-D-12-00152.1

- Frohna JG, Waggoner-Fountain LA, Edwards J, et al; Pediatrics Recruitment Study Team. National pediatric experience with virtual interviews: lessons learned and future recommendations. Pediatrics. 2021;148(4):e2021052904. doi:10.1542/peds.2021-052904

- Huppert LA, Hsiao EC, Cho KC, et al. Virtual interviews at graduate medical education training programs: determining evidence-based best practices. Acad Med. 2021;96(8):1137-1145. doi:10.1097/ACM.0000000000003868

- Yong TM, Davis ME, Coe MP, Perdue AM, Obremskey WT, Gitajn IL. Recommendations on the use of virtual interviews in the orthopedic trauma fellowship match: a survey of applicant and fellowship director perspectives. OTA Inter. 2021;4(2):e130. doi:10.1097/OI9.0000000000000130

- Huppert LA, Hsu G, Elnachef N, et al. A single center evaluation of applicant experiences in virtual interviews across eight internal medicine subspecialty fellowship programs. Med Educ Online. 2021;26(1):1946237. doi:10.1080/10872981.2021.1946237

- Spencer E, Ambinder D, Christiano C, et al. Finding the next resident physicians in the COVID-19 global pandemic: an applicant survey on the 2020 virtual urology residency match. Urology. 2021;157:44-50. doi:10.1016/j.urology.2021.05.079

- Seifi A, Mirahmadizadeh A, Eslami V. Perception of medical students and residents about virtual interviews for residency applications in the United States. PLoS One. 2020;15(8):e0238239. doi:10.1371/journal.pone.0238239

- Snyder MH, Reddy VP, Iyer AM, et al; Society of Neurological Surgeons and American Association of Neurological Surgeons Young Neurosurgeons Committee. Applying to residency: survey of neurosurgical residency applicants on virtual recruitment during COVID-19. J Neurosurg. 2021;1-10. doi:10.3171/2021.8.JNS211600

- Taylor M, Freeman K, Mehaffey J, Wallen T, Okereke I. Applicant perception of virtual interviews in cardiothoracic surgery: a thoracic education cooperative group study. J Thorac Cardiovasc Surg. 2021;S0022-5223(21)01702-5. doi:10.1016/j.jtcvs.2021.11.074

- Ding JJ, Has P, Hampton BS, Burrell D. Obstetrics and gynecology resident perception of virtual fellowship interviews. BMC Med Educ. 2022;22(1):58. doi:10.1186/s12909-022-03113-3

- Gore J, Porten S, Montgomery J, et al. Applicant perceptions of virtual interviews for society of urologic oncology fellowships during the COVID-19 pandemic. Urol Oncol. Seminars and Original Investigations. 2021;S1078-1439(21)00261-1. doi:10.1016/j.urolonc.2021.06.003

- Current Trends in Medical Education. Association of American Medical Colleges. Accessed April 1, 2022. https://www.aamcdiversityfactsandfigures2016.org/report-section/section-3/

- 2018 Electronic Residency Application Service Data. Association of American Medical Colleges. 2018. Accessed March 22, 2022. https://www.aamc.org/system/files/reports/1/all.pdf
