Background and Objectives: Clinical coaching programs can improve clinician performance through feedback following direct observation and the promotion of reflection. This study assessed the feasibility and acceptability of a primary care coaching program applied in community-based practices.

Methods: Using a 31-item behavioral checklist that was iteratively revised, four faculty members observed 18 community-based primary care clinicians (15 of whom were physicians) across 36 patient encounters. Each behavior was scored as a binary variable (observed or not observed). After being observed caring for patients, each clinician participated in a focused feedback session to discuss strengths and areas for improvement.

Results: The behaviors observed most frequently were: reflects compassion (100%), appears to enjoy caring for the patient (100%), leads and follows with open-ended questions (97%), and asks thoughtful and smart questions (95%). Areas for improvement were the behaviors performed least often: apologizes for running behind schedule (18%), acknowledges computer and/or explains role in patient care (14%), and assesses understanding (teachback; 7%). Most clinicians agreed or strongly agreed that they would like to be coached again in the future (81%) and that the coaching feedback would help them become more effective in primary care practice (94%). Nearly all patients surveyed reported that it did not bother them to have another doctor in the room and that it is a good idea to offer coaching to clinicians to help them improve.

Conclusions: Coaching busy primary care clinicians is feasible and a valued experience. Focusing on specific observable behaviors can identify clinicians’ strengths and opportunities for improvement. Patients are pleased to learn that their clinicians are receiving coaching as part of their professional development.

During medical school and residency training, educational methods and curricula aim to promote professional growth and development toward clinical competence. Readings, didactics, and shadowing of skilled clinicians can be helpful. However, many believe the learning strategies with the most impact are structured observation by an experienced clinician educator, combined with feedback, guidance, and advice that stimulate reflection on action (together, what is known as coaching).1 Trainees who are committed to clinical excellence both value and seek out this type of guidance. However, after residency training is completed, there are even fewer opportunities to be observed by trusted and respected individuals who can push clinicians toward masterful practice. Consequently, clinical performance frequently declines over time after training.2 Traditional professional development programs may not adequately support continued maturation toward clinical excellence.3 Therefore, innovative methods that truly promote maturation and growth are needed.

The attainment of expert performance or mastery requires repetitive practice for many hours, thoughtful self-assessment with careful consideration about improvement strategies, and high-quality specific feedback based on structured observations from insightful observers.1 These elements are the foundational components of deliberate practice.1 Elite athletes and musicians rely on deliberate practice concepts to realize and maintain exceptional performance.4,5 They employ coaches who set rigorous practice schedules, observe technique, and provide structured feedback highlighting both strengths and weaknesses. Despite its potential to improve performance, coaching is underutilized in medicine.6,7

The practice of medicine in any field is an evolving challenge because expertise and mastery are extremely difficult to attain and sustain.2,8 Clinicians in training, and those early in their careers, may receive clinical mentoring from senior clinician role models. These mentors generally support them and guide them toward practice norms and ideal behaviors. Clinical coaching differs from mentoring in that the clinician and the coach collaboratively create attainable goals. These goals are informed by the coach's direct observation and by the clinician's self-assessment and in-depth reflection. The purpose of this longitudinal relationship is to facilitate the clinician's efficient improvement toward sustained mastery of clinical skills.9 Clinical coaching programs aiming to augment performance have been studied in several clinical disciplines, including hospital medicine, radiology, and surgery.10-12 Coaching in primary care is particularly critical because clinicians in these disciplines see patients behind closed doors. They also have limited interaction or collaboration with colleagues who may be well positioned to offer feedback. The objective of this study was to assess the feasibility and acceptability of a primary care coaching program applied in community-based practices.

Setting and Context

This pilot program was implemented between January and March of 2016 at two community-based primary care sites in Maryland that are affiliated with Johns Hopkins University School of Medicine. Both of these sites serve the surrounding communities by providing primary care to children and adults in patient-centered medical homes with physicians, nurse practitioners (NP), and physician assistants (PA).

Coaching Intervention

Clinicians at these two sites were introduced to the project at a staff meeting, at which the benefits of coaching were presented along with the goals and objectives of this study. They were reassured that this was exclusively a quality improvement initiative intended to optimize their performance, that the assessments were formative, and that their individual performance data would not be shared with anyone. All clinicians were then invited to sign up to be coached by one of five members of the Johns Hopkins Miller-Coulson Academy of Clinical Excellence. All of these coaches were experienced in clinical coaching, had participated in the intervention design, and had completed a 9-month teaching skills course prior to the intervention.13 Of the 25 eligible clinicians, 18 (72%) elected to participate.

On a mutually agreed-upon date when the primary care clinician had patients scheduled, the coach came to watch the participant. This was usually arranged for either a half-day morning or afternoon session, so that the coach and clinician could meet and debrief after the last patient was seen. Coaching sessions were structured to maximize the known benefits of direct observation.14 For consistency and to maximize focus on specific features, coaches used a checklist of behaviors. The items on the checklist were based on the published tenets of clinical excellence that had previously been developed for coaching of both faculty clinicians and residents in hospital in-patient settings.15,16 Earlier teams that developed the observation sheets made refinements and performed pilot testing. We amended the checklist to include other practical considerations related to contemporary primary care (eg, electronic medical record [EMR] and value-based care).17,18 The checklist was divided into five domains relevant to the ambulatory setting: professionalism and humanism, communication and interpersonal skills, use of EMR, physical examination, and holistic and value-based care. Each of the five domains contained at least three behaviors to be assessed on the checklist. The initial and revised checklists’ domains were designed with consideration of the conceptual frameworks of reflective practice, self-determination theory, lifelong learning, and goal setting.16 Two versions of the observation sheet were adapted for ambulatory outpatient practice—one each for pediatric and adult encounters.

The coach scored every behavior on the checklist as a binary variable (observed or not observed) and recorded details for feedback in a notes column. Each coach performed several practice coaching sessions with clinicians from other practices (not part of the study). These sessions helped the coaches gain familiarity with the checklist and practice using it to facilitate feedback discussions. The practice runs showed that the behavioral checklist could be condensed from 40 items to 31 items for use in the ambulatory setting. The clinicians coached during these practice runs gave the research team feedback on two things: the behaviors included on the checklist and the prompts to be used to facilitate reflection and discussion. During the practice sessions, each coach was also encouraged to reflect on the best and worst coaches they had worked with across different settings, and on what to do and avoid when coaching. This iterative practice, combining feedback from clinicians with discussions among the coaches, formed the basis of coach training for this study (all coaches were formally trained educators). We did not assess agreement between coaches, in part because no clinician was observed by more than one coach.

Formative Assessment and Program Evaluation

After observing each participating clinician for approximately 90 minutes of patient encounters, the coach met with the clinician for approximately 30 minutes to give feedback on strengths and areas for improvement. Each feedback discussion began with the clinician's self-assessment, first of overall performance and then of the areas in which the clinician performed best and worst. Next, the coach drew on data from the behavioral checklist to offer advice and commentary. This commentary was grounded in specific, concrete examples of the clinician's behavior and language during the patient encounters. Particular emphasis was given to items on which the clinician's initial self-assessment differed from the coach's observations. Patients were not present for these feedback discussions.

After the feedback session, each clinician wrote down two changes that they hoped to implement. Additionally, each patient seen in the presence of the coach was given a two-question survey card to ascertain their perspectives about having their primary care clinician coached. The questions were: “Did it bother you at all to have another doctor in the room as part of a quality improvement project?” and “Is it a good idea to offer coaching to providers to help them to improve and to be the best that they can be?” Finally, after the coaching sessions were completed, clinicians were sent a seven-question survey rating the acceptability and usefulness of coaching. Responses used a 5-point Likert scale (strongly agree, agree, neither agree nor disagree, disagree, strongly disagree). The survey prompts were: “I felt comfortable being coached,” “The feedback reminded me of strengths that I have in caring for my patients,” “The feedback pointed out some weaknesses or things that I will try to do differently,” “I became aware of blind spots or surprises that I was not previously aware of,” “I will incorporate new approaches and behaviors into my practice,” “The coach’s feedback may help me to be a better primary care physician for my patients,” and “I would like to be coached in the future.” In our qualitative analysis, we identified themes by comparing and contrasting survey responses.
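The clinician survey results are reported as the share who agreed or strongly agreed with each prompt. That figure collapses the five response options into a single top-two-box proportion. The Python sketch below illustrates the calculation under stated assumptions. The function names and example responses are hypothetical and are not the study's data, and the study's own analysis was performed in Stata.

```python
from collections import Counter

# The five response options used on the clinician survey, most to least favorable.
LIKERT_OPTIONS = (
    "strongly agree",
    "agree",
    "neither agree nor disagree",
    "disagree",
    "strongly disagree",
)
# "Top-two-box": the responses counted as agreement.
AGREEMENT = {"strongly agree", "agree"}


def tally(responses):
    """Return the count for every response option, in survey order (zeros kept)."""
    counts = Counter(responses)
    return {option: counts[option] for option in LIKERT_OPTIONS}


def percent_agree(responses):
    """Percent of responses that are 'agree' or 'strongly agree'."""
    if not responses:
        raise ValueError("no responses to summarize")
    unknown = sorted({r for r in responses if r not in LIKERT_OPTIONS})
    if unknown:
        raise ValueError(f"unrecognized responses: {unknown}")
    n_agree = sum(1 for r in responses if r in AGREEMENT)
    return 100.0 * n_agree / len(responses)


# Hypothetical responses from 18 clinicians to a single prompt (not study data).
example = (
    ["strongly agree"] * 9
    + ["agree"] * 6
    + ["neither agree nor disagree"] * 2
    + ["disagree"]
)
print(tally(example))
print(f"{percent_agree(example):.0f}% agreed or strongly agreed")
```

Reporting the full tally alongside the collapsed percentage keeps neutral and negative responses visible. This matters in a sample of 18, where a single response shifts a percentage by more than 5 points.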

The study was approved by the Johns Hopkins Institutional Review Board. Data analysis was performed with Stata version 9.0 (StataCorp LP, College Station, TX). Frequencies and simple means were calculated for each variable, where appropriate. Qualitative comments were collected and organized by thematic domain.
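The Python sketch below gives a rough illustration of the descriptive statistics named above. It computes the percentage of encounters in which each binary checklist behavior was observed. It also computes the mean, standard deviation, and range, as reported for the clinicians' estimated share of talk time. The sketch assumes a hypothetical encoding that the article does not specify:

- True means observed and False means not observed.
- None marks a behavior that did not apply, for example apologizing for running behind when the clinic was on time.
- Not-applicable encounters are excluded from the denominator.

The behavior labels and sample values are illustrative, not study data.

```python
from statistics import mean, stdev


def behavior_frequencies(encounters):
    """Percent of applicable encounters in which each behavior was observed.

    Each encounter is a dict mapping a behavior label to True (observed),
    False (not observed), or None (not applicable). None values are left out
    of the denominator; a behavior that is None in every encounter is omitted.
    """
    observed = {}
    applicable = {}
    for checklist in encounters:
        for behavior, value in checklist.items():
            if value is None:
                continue  # hypothetical rule: not applicable, so not counted
            applicable[behavior] = applicable.get(behavior, 0) + 1
            observed[behavior] = observed.get(behavior, 0) + (1 if value else 0)
    return {b: 100.0 * observed[b] / applicable[b] for b in applicable}


def summarize(values):
    """Mean, sample standard deviation, and range (needs at least two values)."""
    return {"mean": mean(values), "sd": stdev(values),
            "min": min(values), "max": max(values)}


# Hypothetical checklists from three encounters (not study data).
encounters = [
    {"listens attentively": True, "apologizes for running behind": None,
     "assesses understanding (teachback)": False},
    {"listens attentively": True, "apologizes for running behind": True,
     "assesses understanding (teachback)": False},
    {"listens attentively": True, "apologizes for running behind": False,
     "assesses understanding (teachback)": True},
]
for behavior, pct in behavior_frequencies(encounters).items():
    print(f"{behavior}: {pct:.0f}%")

# Hypothetical estimates of the clinician's share of talk time, in percent.
print(summarize([30, 45, 60]))
```

Excluding not-applicable encounters is one reasonable convention for items scored "when applicable." Counting them as not observed would instead lower those percentages, so whichever rule is used is worth stating alongside the results.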

A total of 18 primary care clinicians were directly observed during the study period. Sixty-one percent (11/18) were female, and 17% (3/18) were not physicians: two were nurse practitioners and one was a physician assistant. Eleven clinicians (61%) were coached at site A and seven (39%) at site B. Eight clinicians (44%) were observed while caring for pediatric patients, and the remaining 10 (56%) were observed while caring for adults. Observation checklists were completed for 36 patient encounters: 14 with pediatric patients and 22 with adult patients.

Observed Behaviors

During the adult encounters, the observers estimated that the clinician spoke for less than half of the conversation time on average (mean: 42%; standard deviation: 16%; range: 25%-80%). Observed strengths were: reflects compassion using verbal acknowledgement and nonverbal facial/body cues (100%), appears to enjoy talking with the patient (100%), leads and follows with open-ended questions (100%), asks engaging questions (100%), and listens attentively (100%; Table 1). The checklist behaviors performed with the lowest frequency were: engages the family (50%), demonstrates culturally sensitive care (50%), directs the position of the computer screen so the patient can view it (40%), apologizes for running behind schedule when applicable (29%), and assesses understanding using teachback (0%).

For the pediatric patient encounters, the clinicians spoke for about half of the time (mean: 51%; standard deviation: 12%; range: 30%-70%). Observed strengths were: reflects compassion using verbal acknowledgement (100%) and nonverbal facial/body cues (100%), appears to enjoy caring for the patient (100%), positions self to facilitate communication (100%), allows parent/patient to talk (100%), and listens attentively (100%). The behaviors performed with the lowest frequency were: demonstrates culturally sensitive care (42%), apologizes for running behind schedule when applicable (7%), and acknowledges computer and/or explains role in patient care (0%).

Intervention Acceptability

All 18 clinicians completed the post-intervention assessment. All clinicians agreed that the coaching feedback reminded them of strengths in caring for their patients and that it pointed out some weaknesses. Eighty-one percent agreed or strongly agreed that they would like to be coached in the future. Ninety-four percent agreed or strongly agreed that the coaching feedback would help them be more effective primary care clinicians for their patients. Only four clinicians (22%) were not convinced that coaching made them aware of blind spots they had not previously recognized. One clinician reported feeling somewhat uncomfortable with the coaching.

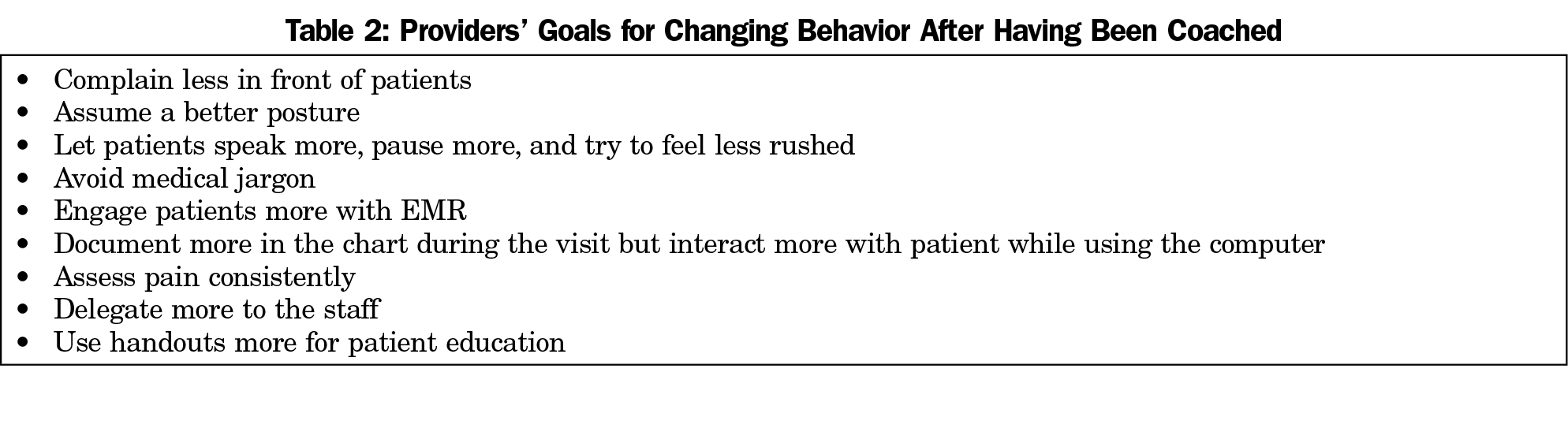

Goals Emerging From Coaching Intervention

Each of the 18 coached clinicians listed two goals, for a total of 36 brief goals that emerged from the discussions and reflections during the coaching session. Nine goals were each mentioned by at least two different clinicians (Table 2); most of these related to communication skills and optimal use of the EMR.

Patient Perspective

Of the 36 patients or parents surveyed, 97% stated that it did not bother them to have another doctor in the room as part of this coaching quality improvement project. Ninety-four percent of patients and parents agreed that it is a good idea to offer coaching to clinicians to help them improve.

Coaching is a core element of deliberate practice and is believed to be necessary for the attainment of mastery. Our pilot study suggests that coaching primary care clinicians is both feasible and valued. The results highlight the acceptance of coaching as a tool that both reminds primary care clinicians of their strengths in caring for their patients and alerts them to areas needing attention. Patients in primary care practices were not only amenable to having their clinicians coached during visits but also viewed the experience positively. They seemed pleased with their primary care clinician's commitment to improvement.

While many studies of coaching have been conducted in the hospital environment and have focused on trainees,19 few have targeted practicing primary care clinicians. One primary care study compared two versions of a motivational interviewing training program: peer coaching, workshops, and self-study, versus the same elements without coaching.20 The addition of peer coaching led to increased knowledge, skills, and confidence in motivational interviewing.20 However, that study used simulated patient telephone interviews. It did not take place in the context of actual patient encounters in busy primary care practices, as ours did. Another coaching program focused on remediating communication skills in struggling clinicians (mainly internists) and demonstrated satisfaction among clinicians and their supervisors.21 However, unlike in our study, the coaching did not take place in the clinicians' actual work environments, was done by an outside consultant, and was not focused on directly observed behaviors from patient encounters.

Prior studies have demonstrated that coaching reduces surgical errors22-24 and improves technical skill acquisition.21,22,25,26 However, a recent literature review identified a gap in evaluating the effects of coaching on nontechnical skills, including those commonly prioritized in primary care, such as communication.19 After being coached, the primary care clinicians in our study recognized a need to improve their communication and their interactions with the EMR. While the EMR affords clinicians unique opportunities, it may also be cumbersome and inefficient.27 It can also become a distractor that leads to unintentional negative body language cues and decreased eye contact with patients.28 Ultimately, effectively balancing attention between the patient and the EMR requires thoughtful practice. This is particularly important because EMR use has been shown to increase visit duration in this time-pressured environment.29-31

Several limitations of this pilot study should be considered. First, the coaching program took place at only two primary care practices, both part of one health system, so the results may not be generalizable to all settings. Second, the coaches were all physicians and members of a peer-selected academy of clinical excellence. Their prior clinical coaching experience may have contributed to the intervention's value, and this may be difficult to replicate without substantial coach training. Finally, although all clinicians were invited, only a subset volunteered to be coached; this group may have been more receptive and more motivated to grow.

Our study highlights the feasibility of implementing an observation-based coaching program within the natural patient flow of busy primary care practices. Primary care clinicians and patients were comfortable with the coaching program. Next steps in the study of clinician coaching in primary care include longitudinal studies that examine whether coaching leads to behavioral change in clinicians' practice.

Acknowledgments

D. Wright is the Anne Gaines and G. Thomas Miller Professor of Medicine, which is supported through the Johns Hopkins Center for Innovative Medicine.

Funding Statement: This study was supported by the Johns Hopkins Osler Center for Clinical Excellence.

References

- Ericsson KA. Deliberate practice and acquisition of expert performance: a general overview. Acad Emerg Med. 2008;15(11):988-994. https://doi.org/10.1111/j.1553-2712.2008.00227.x

- Elder A. Clinical skills assessment in the twenty-first century. Med Clin North Am. 2018;102(3):545-558. https://doi.org/10.1016/j.mcna.2017.12.014

- Thorn PM, Raj JM. A culture of coaching: achieving peak performance of individuals and teams in academic health centers. Acad Med. 2012;87(11):1482-1483. https://doi.org/10.1097/ACM.0b013e31826ce3bc

- Fransen K, Boen F, Vansteenkiste M, Mertens N, Vande Broek G. The power of competence support: the impact of coaches and athlete leaders on intrinsic motivation and performance. Scand J Med Sci Sports. 2018;28(2):725-745. https://doi.org/10.1111/sms.12950

- Davidoff F. Music lessons: what musicians can teach doctors (and other health professionals). Ann Intern Med. 2011;154(6):426-429. https://doi.org/10.7326/0003-4819-154-6-201103150-00009

- Gazelle G, Liebschutz JM, Riess H. Physician burnout: coaching a way out. J Gen Intern Med. 2015;30(4):508-513. https://doi.org/10.1007/s11606-014-3144-y

- Sekerka LE, Chao J. Peer coaching as a technique to foster professional development in clinical ambulatory settings. J Contin Educ Health Prof. 2003;23(1):30-37. https://doi.org/10.1002/chp.1340230106

- Choudhry NK, Fletcher RH, Soumerai SB. Systematic review: the relationship between clinical experience and quality of health care. Ann Intern Med. 2005;142(4):260-273. https://doi.org/10.7326/0003-4819-142-4-200502150-00008

- Deiorio NM, Carney PA, Kahl LE, Bonura EM, Juve AM. Coaching: a new model for academic and career achievement. Med Educ Online. 2016;21:33480. https://doi.org/10.3402/meo.v21.33480

- Iyasere CA, Baggett M, Romano J, Jena A, Mills G, Hunt DP. Beyond continuing medical education: clinical coaching as a tool for ongoing professional development. Acad Med. 2016;91(12):1647-1650. https://doi.org/10.1097/ACM.0000000000001131

- Green DE, Maximin S. Professional coaching in radiology: practice corner. Radiographics. 2015;35(3):971-972. https://doi.org/10.1148/rg.2015140306

- Greenberg CC, Dombrowski J, Dimick JB. Video-based surgical coaching: an emerging approach to performance improvement. JAMA Surg. 2016;151(3):282-283. https://doi.org/10.1001/jamasurg.2015.4442

- Cole KA, Barker LR, Kolodner K, Williamson P, Wright SM, Kern DE. Faculty development in teaching skills: an intensive longitudinal model. Acad Med. 2004;79(5):469-480. https://doi.org/10.1097/00001888-200405000-00019

- Holmboe ES, Hawkins RE, Huot SJ. Effects of training in direct observation of medical residents’ clinical competence: a randomized trial. Ann Intern Med. 2004;140(11):874-881. https://doi.org/10.7326/0003-4819-140-11-200406010-00008

- Christmas C, Kravet SJ, Durso SC, Wright SM. Clinical excellence in academia: perspectives from masterful academic clinicians. Mayo Clin Proc. 2008;83(9):989-994. https://doi.org/10.4065/83.9.989

- Rassbach CE, Blankenburg R. A novel pediatric residency coaching program: outcomes after one year. Acad Med. 2018;93(3):430-434.

- Olayiwola JN, Rubin A, Slomoff T, Woldeyesus T, Willard-Grace R. Strategies for primary care stakeholders to improve electronic health records (EHRs). J Am Board Fam Med. 2016;29(1):126-134. https://doi.org/10.3122/jabfm.2016.01.150212

- Shrank WH. Primary care practice transformation and the rise of consumerism. J Gen Intern Med. 2017;32(4):387-391. https://doi.org/10.1007/s11606-016-3946-1

- Lovell B. What do we know about coaching in medical education? A literature review. Med Educ. 2018;52(4):376-390. https://doi.org/10.1111/medu.13482

- Fu SS, Roth C, Battaglia CT, et al. Training primary care clinicians in motivational interviewing: a comparison of two models. Patient Educ Couns. 2015;98(1):61-68. https://doi.org/10.1016/j.pec.2014.10.007

- Egener B. Addressing physicians’ impaired communication skills. J Gen Intern Med. 2008;23(11):1890-1895. https://doi.org/10.1007/s11606-008-0778-7

- Cole SJ, Mackenzie H, Ha J, Hanna GB, Miskovic D. Randomized controlled trial on the effect of coaching in simulated laparoscopic training. Surg Endosc. 2014;28(3):979-986. https://doi.org/10.1007/s00464-013-3265-0

- Bonrath EM, Dedy NJ, Gordon LE, Grantcharov TP. Comprehensive surgical coaching enhances surgical skill in the operating room. Ann Surg. 2015;262(2):205-212. https://doi.org/10.1097/SLA.0000000000001214

- Hu YY, Peyre SE, Arriaga AF, et al. Postgame analysis: using video-based coaching for continuous professional development. J Am Coll Surg. 2012;214(1):115-124. https://doi.org/10.1016/j.jamcollsurg.2011.10.009

- Hu YY, Mazer LM, Yule SJ, et al. Complementing operating room teaching with video-based coaching. JAMA Surg. 2017;152(4):318-325. https://doi.org/10.1001/jamasurg.2016.4619

- Liao WC, Leung JW, Wang HP, et al. Coached practice using ERCP mechanical simulator improves trainees’ ERCP performance: a randomized controlled trial. Endoscopy. 2013;45(10):799-805. https://doi.org/10.1055/s-0033-1344224

- Gawande A. Why doctors hate their computers. New Yorker. 2018.

- Saleem JJ, Flanagan ME, Russ AL, et al. You and me and the computer makes three: variations in exam room use of the electronic health record. J Am Med Inform Assoc. 2014;21(e1):e147-e151. https://doi.org/10.1136/amiajnl-2013-002189

- Frankel R, Altschuler A, George S, et al. Effects of exam-room computing on clinician-patient communication: a longitudinal qualitative study. J Gen Intern Med. 2005;20(8):677-682. https://doi.org/10.1111/j.1525-1497.2005.0163.x

- Hsu J, Huang J, Fung V, Robertson N, Jimison H, Frankel R. Health information technology and physician-patient interactions: impact of computers on communication during outpatient primary care visits. J Am Med Inform Assoc. 2005;12(4):474-480. https://doi.org/10.1197/jamia.M1741

- Lafata JE, Shay LA, Brown R, Street RL. Office‐based tools and primary care visit communication, length, and preventive service delivery. Health Serv Res. 2016;51(2):728-745. https://doi.org/10.1111/1475-6773.12348
