Introduction: A core principle of family medicine is information mastery, or application of principles of evidence-based medicine in clinical practice. While information mastery teaching and assessment are beginning to permeate postgraduate family medicine training programs, and while exciting literature on new open resource assessment methods is emerging, there are no prior descriptions of examinations that specifically assess medical students’ information mastery competency.

Methods: To test information mastery competency, a novel final exam for the family medicine clerkship was developed, implemented, and evaluated. During the timed exam, the competency-based information mastery assessment (IMA) requires students to look up evidence-based information using web resources to answer case-based questions. Exam feasibility was tested with pilot examinees whose reactions were gauged. Student performance on the traditional closed book knowledge assessment (KA) was compared with performance on the open internet IMA. Exam performance was compared with preceptor ratings of students’ clinical performance. Low performers were further analyzed for preceptors’ ratings of specific student skills in information mastery and self-directed learning.

Results: An open internet IMA testing knowledge application and information mastery skills is not only feasible but can also be educational. Student performance scores on the open internet IMA do not differ from scores on the closed book KA. Students describe many positive features of this open internet IMA. Student performance on the competency-based IMA correlates with clinical ratings by preceptors and with preceptors’ judgment of information mastery skills.

Conclusions: A novel approach to assessment in family medicine clerkships may be used to assess student competency in information mastery. Further research is needed for enhanced exam validation.

In today’s clinical practice, the ability to find the right answer to a point-of-care question is more important than memorizing facts destined to change. A curriculum in information mastery,1 the application of evidence-based medicine in clinical practice, can improve residents’ abilities in this area,2 but the current literature lacks a formal study of medical students’ information mastery competency.

Many medical schools measure knowledge after the family medicine clerkship via the National Board of Medical Examiners (NBME) subject exam. This exam, a multiple-choice, knowledge-based assessment rather than a competency-based assessment, is not designed to evaluate ability to render real-time clinical judgments using web resources. Open resource exam proponents argue that alternative tests can be particularly valuable in assessing higher levels of learning, eg, application rather than rote memorization.3

Current evidence on open resource exams is mixed. In one study, medical students who took an open book exam had slightly higher test scores, deeper understanding, and reduced anxiety compared with closed book examinees.4 Similarly, college students preferred open resource exams, reported less anxiety, and performed slightly better5 or no worse6 than closed book examinees. Conversely, a systematic review of 37 studies (mostly on college students) found better performance, but also more preparation time, with closed book exams.3 Although some students prefer open book tests, their study habits can be disorganized7 and performance may vary.8 All eight systematically reviewed studies that pertained specifically to medical students used knowledge-based multiple-choice question exams, not case-based questions requiring synthesis of material retrieved from medical systematic reviews.3

Hence current research on open resource exams discusses new ways to assess students in an era of quickly changing information, yet it does not specifically address competency assessments of information mastery. The present study adds to the literature by describing the development, implementation and evaluation of a new open-internet medical information mastery competency assessment tool.

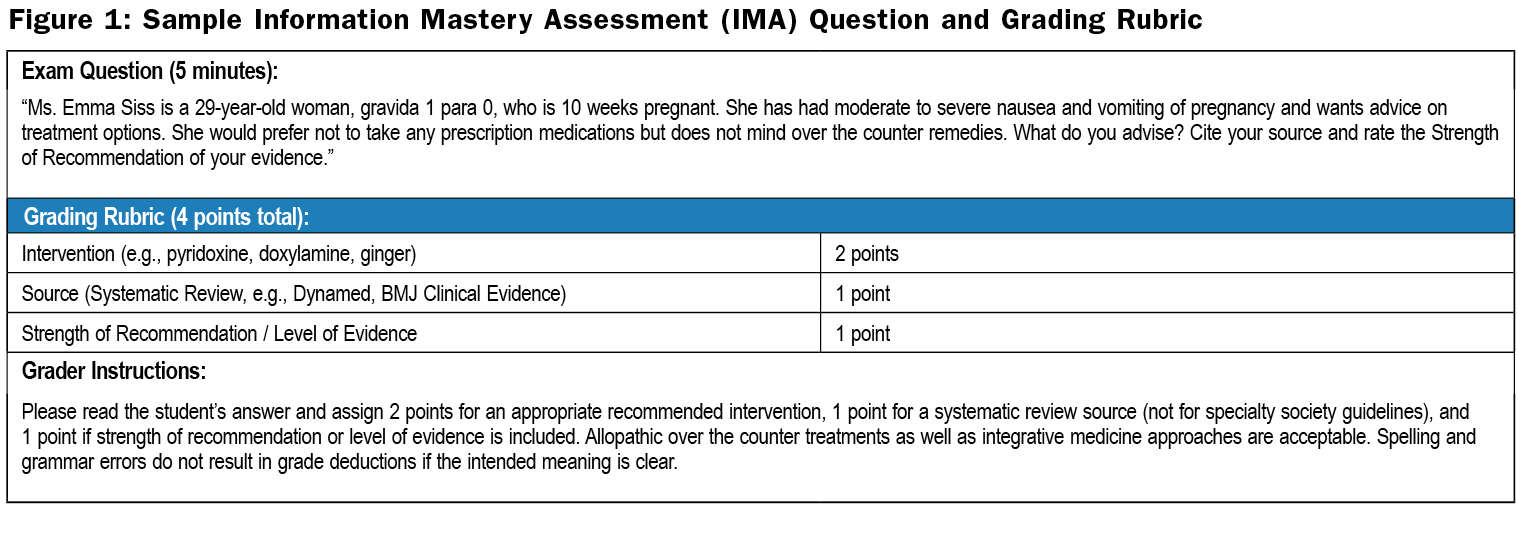

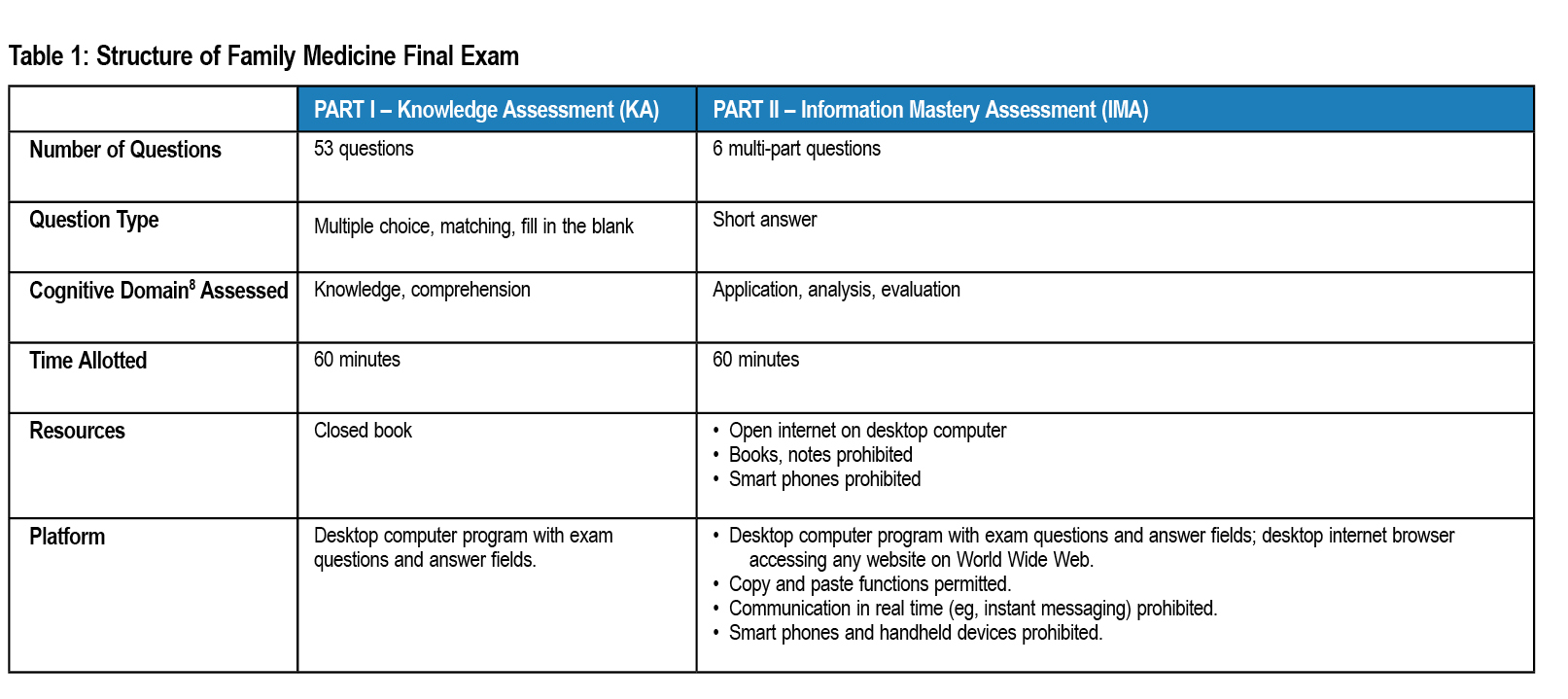

At Tufts University School of Medicine, third-year family medicine clerkship students took a computer-based final exam comprising two parts: a closed book knowledge assessment (KA), and a timed open internet information mastery assessment (IMA). The latter requires students to efficiently access online information to answer clinical questions (exam details in Table 1), testing higher-order cognitive skills.9 For each case scenario, examinees provide a clinical recommendation, cite a source of evidence, and identify a strength of recommendation rating10 (sample question in Figure 1).

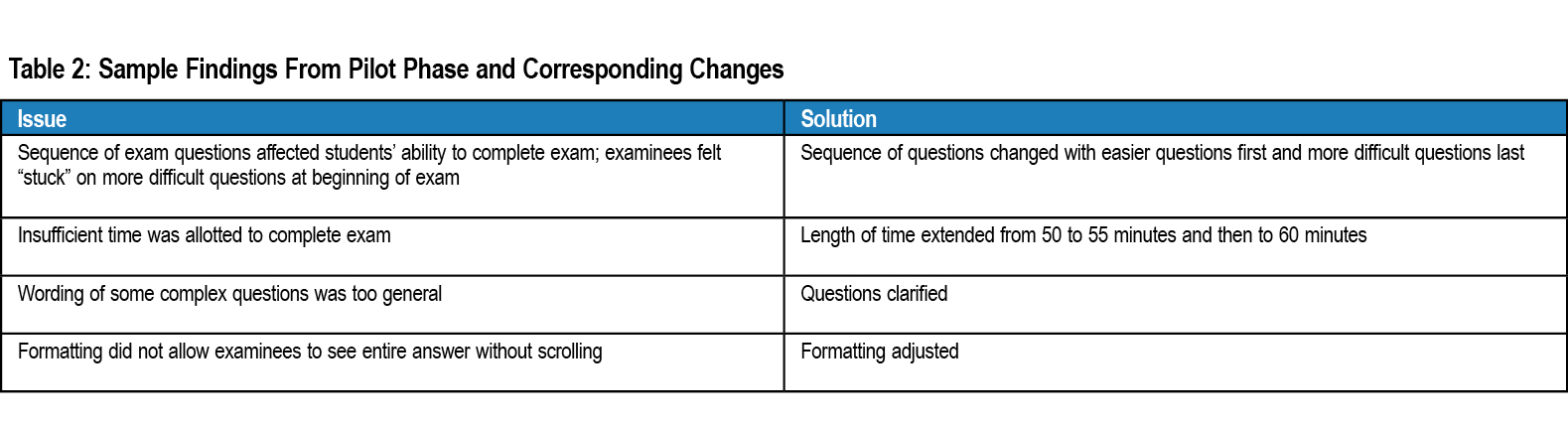

A pilot phase tested feasibility. An early draft of the open internet IMA was administered to two students, one who had taken Family Medicine (whose curriculum includes an information mastery workshop) and one who had not. Based on their feedback, a revised IMA version was administered (no credit) to two separate groups of eight students apiece who had previously passed the family medicine clerkship. Pilot examinees gave feedback on format, time, clarity and question sequencing. Their cognitive interview transcripts were subjected to standard qualitative analysis methodology to extract themes, which informed modifications to the IMA (Table 2).

Both parts of the updated exam version (KA and IMA) were then administered for high-stakes credit to 599 third-year students enrolled in the mandatory 6-week family medicine clerkship over three years. Technical issues and timing were further monitored during the live study phase.

Scores from the closed book KA and open internet IMA were compared using paired Student's t-tests.
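The paired comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' analysis code: the function name `paired_t_statistic` and the five pairs of scores are hypothetical and are not study data. It computes the paired t statistic as the mean within-student difference divided by its standard error.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """Return (t, degrees_of_freedom) for a paired t-test on two score lists.

    Each position in the two lists must belong to the same student.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("paired samples must have the same length")
    if len(scores_a) < 2:
        raise ValueError("need at least two pairs")

    # Work on the within-student differences (KA minus IMA, for example).
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD, n - 1 denominator
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")

    n = len(diffs)
    t = mean_diff / (sd_diff / math.sqrt(n))
    return t, n - 1


# Hypothetical percent scores for five students (NOT data from the study).
ka_scores = [82, 79, 90, 75, 84]
ima_scores = [80, 81, 88, 77, 79]

t_value, df = paired_t_statistic(ka_scores, ima_scores)
print(f"t = {t_value:.2f}, df = {df}")
```

A t statistic near zero, as in the comparison reported in this study, indicates that the average within-student difference is small relative to its variability. Converting t to a p-value requires the t distribution with n - 1 degrees of freedom, which a statistics package would normally supply.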

Additionally, exam scores were compared with clinical performance grades rendered by preceptors (family physicians hosting students in their clinical practices). Preceptors assess students using Likert (1-4) ratings of domains such as medical knowledge and self-directed learning, and also select an overall clinical grade from five levels: honors, high pass, pass, low pass and fail.

In the present study, the open internet IMA tests competency, or the achievement of a minimum skill level in a criterion-referenced (not normative) manner. Students earning higher clinical performance grades exceed expectations, reaching well above minimum required competency. Therefore, to capture data on students not meeting competency, clinical grades in the lowest three categories (pass, low pass and fail) were compared with IMA performance; these grades are rendered by preceptors based on clinical performance, not on exam score.

In this low-performing group, preceptors’ Likert scale (1-4) ratings of student skills in information mastery and self-directed learning (skills related to self recognition of need to access point-of-care information) were also analyzed.
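The subgroup comparison outlined above can be illustrated with a short Python sketch. It is written under stated assumptions rather than taken from the study: the record layout, the function name `share_below_mean`, and the five student records are hypothetical, and "below average" is assumed to mean below the mean score of the whole class.

```python
from statistics import mean

# The lowest three of the five clinical grade levels named in the text.
LOW_GRADES = {"pass", "low pass", "fail"}


def share_below_mean(students, exam_key):
    """Fraction of low-clinical-grade students scoring below the class mean.

    `students` is a list of dicts with a "clinical_grade" string and a
    numeric score stored under `exam_key` (a hypothetical layout).
    Returns None when no student falls in the low-grade group.
    """
    class_mean = mean(s[exam_key] for s in students)
    low_group = [s for s in students if s["clinical_grade"] in LOW_GRADES]
    if not low_group:
        return None
    below = sum(1 for s in low_group if s[exam_key] < class_mean)
    return below / len(low_group)


# Hypothetical records (NOT data from the study).
students = [
    {"clinical_grade": "honors", "ka": 90, "ima": 92},
    {"clinical_grade": "high pass", "ka": 85, "ima": 86},
    {"clinical_grade": "pass", "ka": 84, "ima": 70},
    {"clinical_grade": "low pass", "ka": 70, "ima": 68},
    {"clinical_grade": "high pass", "ka": 76, "ima": 84},
]

for exam in ("ka", "ima"):
    print(exam, share_below_mean(students, exam))
```

Running the same function on both exam parts puts the KA and IMA results for the low-performing group on the same footing, which is the kind of side-by-side comparison the Results report.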

This study was approved by the Institutional Review Board of Tufts Medical Center.

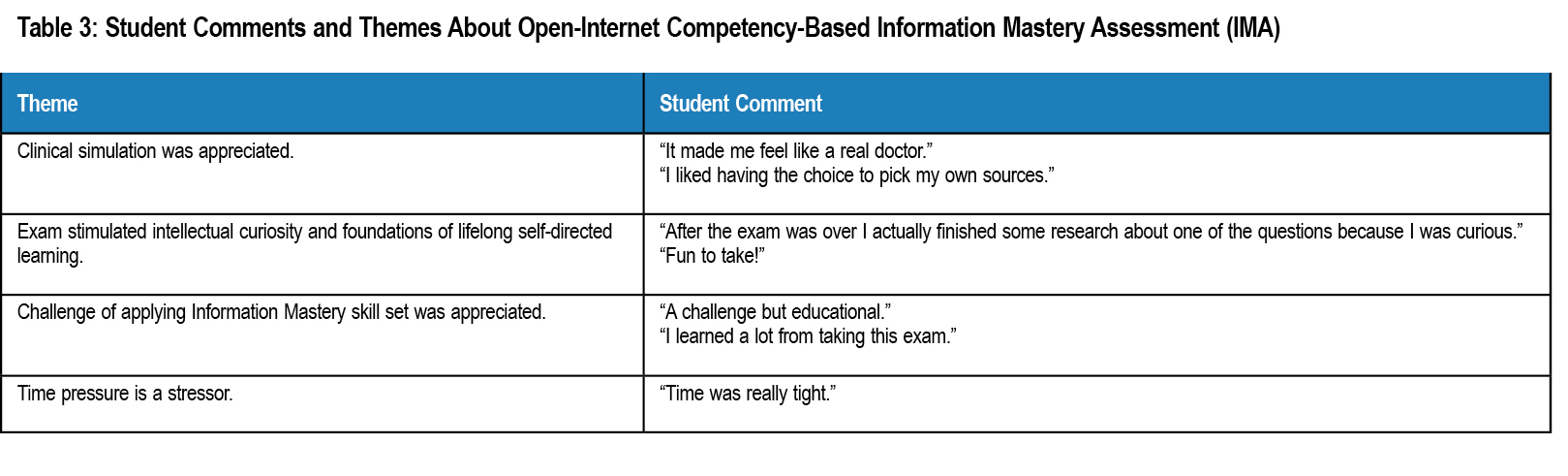

Regarding feasibility, the exam was successfully administered to 599 students over 3 years. Over 90% of students completed the exam in the allotted time, and all demonstrated ability to use web resources to answer clinical questions. Cognitive interview data on reactions to the open internet IMA revealed student concern about time pressure, but otherwise showed an appreciation of the higher-order skills tested (Table 3).

Regarding performance, the open internet IMA mean score of 81% was not significantly different from the closed book KA mean score of 82% (t-value 0.47, NS). Half of students earning poor clinical grades from preceptors scored below average on the closed book KA, but two-thirds of these students scored below average on the open internet IMA. Within this low-performing group, preceptors gave 95% of students poor Likert (1-2 of 4) ratings specifically in information mastery and gave 65% poor ratings in self-directed learning. Beyond these low quantitative (Likert) ratings, preceptors’ written descriptions of low-performing examinees noted poor synthesis and application of knowledge, and weak self-directed learning skills.

An open internet family medicine clerkship final exam testing application of knowledge and information mastery skills is feasible. Unlike in prior literature on closed book versus open resource exams,3 students in the present study performed as well on open internet competency-based exam questions as on closed book knowledge-based questions. They also appreciated the higher-order skills and simulation of clinical practice. Notably, the comment that this exam is “fun to take” is not typically heard after traditional exams.

IMA scores and clinical grades were correlated; both assessment tools identified low performers. By observing students in the clinical setting over 6 weeks, preceptors frequently identified students lacking higher-order knowledge synthesis and application skills; likewise, the IMA detected the same students.

One study weakness is the need for detailed exam validation. Further validity research will use item analysis and comparison of high versus low performers. While it would be straightforward to compare this IMA with standardized tests such as FM Cases or the NBME “shelf” exam, the latter are knowledge assessments. Better competency-based comparators would be clinical performance scores across all required clerkships, high-stakes Objective Structured Clinical Exam (OSCE) scores, or residency director evaluations of medical school graduates after PGY-1.

As in most education research, the most compelling outcomes are the hardest to measure. Although students endorsed learning from the IMA, it remains unknown whether the exam corresponds with long-term retention. If validated, this kind of exam should be used more broadly to assess critical skills not currently tested in other parts of the curriculum.

The present findings are important because in an age of internet-based knowledge evolution, physicians and medical trainees must be competent at quickly accessing, synthesizing, and applying ever-updating information to make clinical decisions. Some argue that today’s information explosion mandates that evaluation of physicians’ practice include assessment of this ability, which can be done via open resource exams.3 Some specialty boards are considering changing recertification assessment methods to better align with higher-order skills needed in clinical medicine. Likewise in medical schools, an exam such as the present IMA might even replace knowledge-based tests. Because assessment drives learning,11 we should not only discuss concepts of information mastery with our learners, but also assess their skills in providing quality patient care based on the most current information available. After all, as we tell our students, life itself is open book.

Acknowledgments

Presented at the Society of Teachers of Family Medicine Conference on Medical Student Education, Phoenix, Arizona, January 2016.

References

1. Slawson DC, Shaughnessy AF, Bennett JH. Becoming a medical information master: feeling good about not knowing everything. J Fam Pract. 1994;38(5):505-513.

2. Shaughnessy AF, Gupta PS, Erlich DR, Slawson DC. Ability of an information mastery curriculum to improve residents' skills and attitudes. Fam Med. 2012;44(4):259-264.

3. Durning SJ, Dong T, Ratcliffe T, et al. Comparing open-book and closed-book examinations: a systematic review. Acad Med. 2016;91(4). https://doi.org/10.1097/ACM.0000000000000977

4. Broyles I, Cyr P, Korsen N. Open book tests: assessment of academic learning in clerkships. Med Teach. 2005;27(5):456-462. https://doi.org/10.1080/01421590500097075

5. Gharib A, Phillips W, Mathew N. Cheat sheet or open-book? A comparison of the effects of exam types on performance, retention, and anxiety. Psych Research. 2012;2(8):469-478. http://eric.ed.gov/?id=ED537423

6. Mathew N, Gharib A, Phillips W. Student preferences and performance: a comparison of open-book, closed book, and cheat sheet exam types. Proceedings of The National Conference On Undergraduate Research (NCUR). Utah: Weber State University, March 2012. www.ncurproceedings.org/ojs/index.php/NCUR2012/article/view/238

7. Karagiannopoulou E, Milienos FS. Exploring the relationship between experienced students' preference for open- and closed-book examinations, approaches to learning and achievement. Educ Research and Evaluation. 2013;19(4):271-296. https://doi.org/10.1080/13803611.2013.765691

8. Block R. Discussion of the effect of open-book and closed-book exams on student achievement in an introductory statistics course. PRIMUS. 2012;22(3):228-238. https://doi.org/10.1080/10511970.2011.565402

9. Bloom BS, Engelhart MD, Furst EJ, Hill WH. Taxonomy of educational objectives: the classification of educational goals. Handbook I: Cognitive domain. New York: David McKay Company; 1956.

10. Ebell M, Siwek J, Weiss B, et al. Strength of recommendation taxonomy (SORT): a patient-centered approach to grading evidence in the medical literature. Am Fam Physician. 2004;69(3):548-556. https://doi.org/10.3122/jabfm.17.1.59

11. McLachlan JC. The relationship between assessment and learning. Med Educ. 2006;40(8):716-717. https://doi.org/10.1111/j.1365-2929.2006.02518.x
